This article is the fourth in an editorial series with a goal of directing line of business leaders in conjunction with enterprise technologists with a focus on opportunities for retailers and how Dell can help them get started. The guide also will serve as a resource for retailers that are farther along the big data path and have more advanced technology requirements.

In the last article, we focused on how big data can support the increasingly valuable 360 view of the customer. The complete insideBIGDATA Guide to Retail is available for download from the insideBIGDATA White Paper Library.

Retail Gains with Distributed Systems

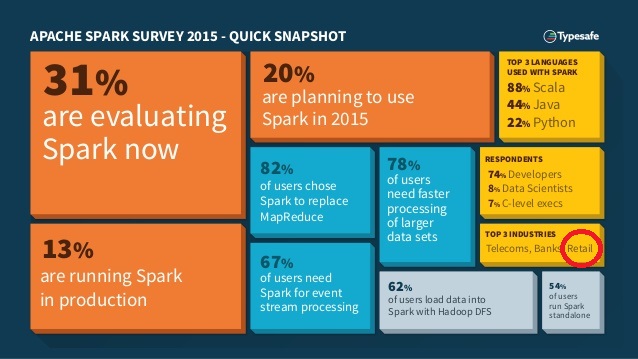

To compete in the age of the Internet storefront, large retailers are working to extend their early lead in adopting big data technology by utilizing scalable data management systems that integrate online and offline data so they can better understand their customers and improve the efficiency of their operations. In particular, retailers need to connect and process data in many formats from disparate systems and sources, including the social media sites that consumers interact with. Many retailers are exploring the opportunities surrounding the dominant entries in the distributed processing architectures: Apache Hadoop and Spark and are looking for ways to get started with these architectures. Even though Hadoop has been in the market since 2011, it is still a relatively new technology. Spark, on the other hand, can be considered the future of distributed processing architectures—a needs-based solution, i.e. if there is a need, a retailer will come up to speed very quickly to take advantage of the technology available.

Apache Hadoop

The Hadoop data storage and processing system offers compelling benefits for retail organizations that want to extract value out of huge amounts of

structured, unstructured and semi-structured data. With Hadoop, you can use and store any kind of data, from any source, in native format, and perform a wide variety of analyses and transformations on that data.

The seeds of Hadoop began with Doug Cutting and Mike Cafarella in 2002 with their Nutch project. In 2006, Cutting went to work at Yahoo and morphed the storage and processing parts of Nutch into Hadoop (named after Cutting’s son’s stuffed elephant) to capture and analyze the massive amounts of data generated by the company. Today, retail organizations can leverage the experience of these digital leaders by deploying the same platform in the retail environment. Even better, Hadoop allows you to start small and scale your solution to terabytes of data, or even petabytes, inexpensively.

Big Data technologies such as Hadoop (an opensource programming framework that supports the processing of large data sets in a distributed computing environment) are ideally suited to collecting and analyzing unstructured data types like the many uses in retail including web logs that show the movements of every customer though an online storefront. This data can then be combined with existing business intelligence and sales data to provide new insights.

Hadoop allows your organization to store petabytes, and even exabytes, of data cost-effectively. As the amount of data in a cluster grows, you can add

new servers with local storage incrementally and inexpensively. Thanks to the way Hadoop takes advantage of the parallel processing power of the servers in the cluster, a 100-node Hadoop instance can answer questions on 100 terabytes of data just as quickly as a 10-node instance can answer questions on 10 terabytes.

Both robust and reliable, Hadoop handles hardware and system failures automatically, without losing data or interrupting data analyses. Better still, Hadoop runs on clusters of industry-standard servers. Each has local CPU and storage resources, and each has the flexibility to be configured with the proper balance of CPU, memory, and drive capacity to meet your specific performance needs.

Ultimately, Hadoop makes it possible to conduct the types of analysis that would be impractical or even impossible using virtually any other database or data warehouse. Along the way, Hadoop helps your retail organization reduce costs and extract more value from your data.

Apache Spark

Spark is the latest distributed compute framework to come out to serve the big data community. Spark compliments Hadoop on many levels, but they do not perform exactly the same tasks. Most environments will support both and they are often used together. Spark is reported to process up to 100 times faster than Hadoop in certain circumstances, but it does not provide its own distributed storage system. Many big data projects involve installing Spark on top of Hadoop, where Spark’s advanced analytics applications can make use of data stored using the Hadoop Distributed File System (HDFS).

Distributed storage like HDFS is fundamental to big data deployments as it allows vast multi-petabyte data sets to be stored across many servers with

direct attached storage, rather than involving costly custom hardware which would hold it all on one device. These systems are scalable, meaning that

more drives can be added to the network as the dataset grows in size.

Spark has the edge over Hadoop in terms of speed and usability. Spark handles most of its computation “in-memory”—copying them from the distributed physical storage into far faster logical RAM memory and the ability to execute complex workflows. This reduces the amount of time consumed writing and reading to and from slow, high-latency mechanical hard drives that needs to be done under Hadoop’s MapReduce system.

MapReduce writes all of the data back to the physical storage medium after each operation. This was originally done to ensure a full recovery could be made in case something goes wrong—as data held electronically in RAM is more volatile than that stored magnetically on disks. However Spark arranges data in what are known as Resilient Distributed Datasets, which can be recovered following failure.

Spark’s functionality for handling advanced data processing tasks such as near real-time stream processing and machine learning is way ahead of what is possible with Hadoop alone. Spark streaming is probably the hottest thing in the big data world right now because of the demand for “near real-time” analytics. With Spark-based near real-time analytics—retailers are looking for immediate response to customer needs for efficient recommendation engine processing. Realtime processing means that data can be fed into an analytical application the moment it is captured, and insights immediately fed back to the user through a dashboard, to allow action to be taken. This allows customer to process and analyze data in a short window of time on a continuous basis.

If you prefer, the complete insideBIGDATA Guide to Retail is available for download in PDF from the insideBIGDATA White Paper Library, courtesy of Dell and Intel.

Speak Your Mind