In this video from the 2016 Stanford HPC Conference, DK Panda from Ohio State University presents: Best Practices – Big Data Acceleration.

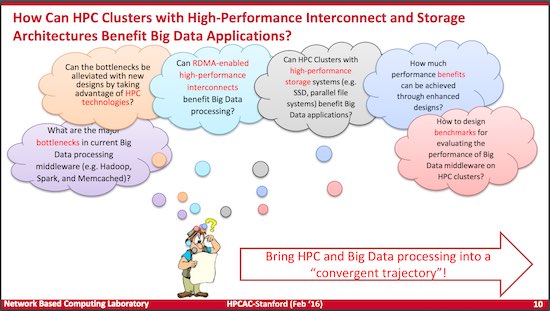

“This talk will provide an overview of challenges in accelerating Hadoop, Spark and Memcached on modern HPC clusters. An overview of RDMA-based designs for multiple components of Hadoop (HDFS, MapReduce, RPC and HBase), Spark, and Memcached will be presented. Enhanced designs for these components to exploit in-memory technology and parallel file systems (such as Lustre) will be presented. Benefits of these designs on various cluster configurations using the publicly available RDMA-enabled packages from the OSU HiBD project (http://hibd.cse.ohio-state.edu) will be shown.”

DK Panda is a Professor and University Distinguished Scholar of Computer Science and Engineering at the Ohio State University. He has published over 350 papers in the area of high-end computing and networking. The MVAPICH2 libraries (http://mvapich.cse.ohio-state.edu), which support MPI and PGAS on IB, iWARP, RoCE, GPGPUs, Xeon Phis and virtualization, are currently used by more than 2,525 organizations in 75 countries and have been downloaded more than 348,000 times from the project’s site. These libraries power several InfiniBand clusters in the TOP500 list, including the 10th-, 13th- and 25th-ranked systems. The RDMA packages for Apache Hadoop, Apache Spark and Memcached, together with the OSU HiBD benchmarks from his group (http://hibd.cse.ohio-state.edu), are also publicly available. These packages are currently used by more than 145 organizations in 20 countries and have been downloaded more than 14,800 times from the project’s site. He is also an IEEE Fellow.
