I was very pleased to attend the GPU Technology Conference 2016 as the guest of host company NVIDIA on April 4-7 in Silicon Valley. I was impressed enough with the experience that I wanted to write this Field Report to give readers an in-depth perspective on what I saw.

I didn’t know much about NVIDIA going in, so I was eager to learn what the company brought to the table for insideBIGDATA’s coverage areas. I was delighted to find that the overarching theme of the show was “deep learning” and all its underlying technologies and applications. It was clear that NVIDIA is “all in” on deep learning and its pursuit of becoming the one generalized algorithm that can achieve superhuman results without the need for domain experts.

For readers not yet up to speed with deep learning, a brand new book, currently in pre-publication with MIT Press, is available online for free: Deep Learning by Ian Goodfellow, Yoshua Bengio and Aaron Courville.

I found the conference to be very well organized and, I believe, well appreciated by the 5,000+ attendees. The event was held at the San Jose Convention Center, a venue that is now very familiar to me. I like this venue for its ability to absorb just about any size of conference, and especially for its integration with the adjacent San Jose Marriott hotel (you can totally avoid going outside if you’re staying at the hotel and attending the conference, a big plus during those sweltering summer months).

I arrived just in time for an evening press reception at the Westin Hotel across the street. The energy of my fellow press attendees was evident, so I was looking forward to the next full day of activities. I also got to take in the Posters and Beer Reception in the long hallways of the convention center. I really enjoyed this feature of the show because research posters reminded me of my days in academia where grad students would prepare elaborate posters to summarize their work and stand around to explain the highlights of their research to interested parties. My favorite group of posters was from the astrophysics researchers who use GPU (graphics processing unit) technology in lieu of supercomputers to perform calculations. In a previous life, I used machine learning techniques in the data analysis phase of a major astrophysics project, so this really resonated with me. I spoke at length to one group of undergrads from the Tarleton State University Particle Modeling Group who presented their research on “N-body Simulation of Binary Star Mass Transfer Using NVIDIA GPUs.” What a great group of smart, young scientists! I was very impressed.

The next day was the main keynote of the conference presented by NVIDIA CEO Jen-Hsun Huang. It took place in the cavernous main hall where it seemed like all attendees were present. I got to sit in the designated “press only” area located front-center of the stage, complete with tables and power outlets to encourage more efficient journalistic duties. This was a nice touch because I’ve been to conferences where there was no press area at all, making my job a lot harder.

Jen-Hsun’s mammoth two-hour talk was overwhelming! There were so many substantial announcements that I could hardly contain my enthusiasm as I took it all in. I’ve been at conferences where the host company overstates the importance of its announcements, but this was not the case with NVIDIA. Each announcement was significant (itemized later in this report). While witnessing the main keynote, I soon got a sense of how important NVIDIA is to the accelerating field of deep learning. Jen-Hsun made the point that although supervised learning is important, the vast majority of the world’s data is not labeled, and large companies like Facebook are investing heavily in unsupervised learning. As a data scientist myself, I got quite excited about all the paths of research I planned to investigate after the show, using the conference presentations as a roadmap. Since returning, I’ve already done a deep dive into GPU technology and how it can help me with data science and machine learning. Thanks to NVIDIA for this new perspective!

I also found a good tech vibe in the exhibit hall. The night before, in my hotel room, I prepared a short list of vendor booths to visit, and I was able to have substantive chats with each company. I’ve listed my favorites below, along with a brief summary of what I learned:

- Agrima Infotech – (India) deep learning-based cloud platform offering computer vision, natural language processing and Big Data analytics.

- Altoros – (Sunnyvale) TensorFlow as a service for data science teams.

- Baidu Research – (Sunnyvale) brings together top researchers, scientists and engineers from around the world, including Dr. Andrew Ng, a deep learning maverick, to work on fundamental AI research.

- Blazegraph – (Washington, DC) provider of highly scalable software for solving complex graph and machine learning algorithms. Blazegraph Database 2.1.0.

- Bright Computing – (San Jose) provider of cluster and cloud management software.

- Conservation Metrics – (Santa Cruz) combines recent advances in sensor hardware technology with GPU accelerated Deep Learning and cutting edge analytical tools to facilitate data-driven approaches to conservation.

- DeepArt – (Germany) uses a deep neural network to turn photos into art. Try their free demo (see inset photo of me using van Gogh theme!)

- GPUdb – (San Francisco) GPU accelerated distributed database with analytical processing and visualization support that handles high velocity Big Data feeds.

- J!Quant – (Brazil) an algorithm-based venture builder.

- MapD – (San Francisco) big data analytics platform that queries and visualizes big data up to 100x faster than other systems. MapD technology leverages the massive parallelism of commodity GPUs to execute SQL queries over multi-billion row datasets with millisecond response times and visualizes the results using the rendering capabilities of the GPUs.

- MathWorks – (Sunnyvale) leading developer of mathematical computing software including MATLAB (I used the open source equivalent Octave for years before moving to R).

- Onu Technology – (San Jose) cutting-edge machine intelligence solutions that let you easily, quickly, and elegantly solve challenges in big data analysis, graph computation, computer vision, NLP, and more.

- Real Life Analytics – (London) uses AI to measure and maximize ROI in the digital signage and out of home advertising industry. I never knew that digital ads were looking back at me!

- Twitter Cortex – (San Francisco) a team of engineers, data scientists, and machine learning researchers dedicated to building a unifying representation of all of the users and content on Twitter. I saw a cool demo of real-time classification of videos.

- Yahoo Research – (Sunnyvale) a group of top-tier researchers working on applications of deep learning using GPUs.

As AI sweeps across the technology landscape, NVIDIA unveiled a series of new products and technologies focused on deep learning, virtual reality and self-driving cars. Over the course of his keynote, the CEO described the current state of AI, pointing to a wide range of ways it’s being deployed. He noted more than 20 cloud-services giants, from Alibaba to Yelp and Amazon to Twitter, that generate vast amounts of data in their hyperscale data centers and use NVIDIA GPUs for tasks such as photo processing, speech recognition and photo classification. Here is a summary of all the NVIDIA announcements mentioned:

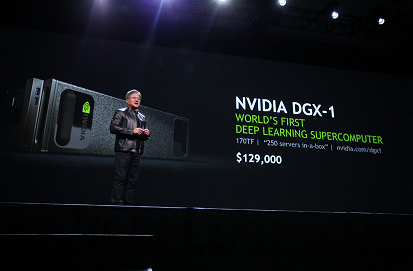

- The world’s first deep-learning supercomputer in a box: a single integrated system with the computing throughput of 250 servers. The NVIDIA DGX-1, with 170 teraflops of half precision performance, can speed up training times by over 12x. Notably, Google’s new TensorFlow runs on the new DGX-1, part of an effort to democratize deep learning.

- Underpinning DGX-1 is a revolutionary new processor, the NVIDIA Tesla P100 GPU, the most advanced accelerator ever built and the first to be based on the company’s 11th generation Pascal architecture. Built on five breakthrough technologies, which the company called “miracles,” the Tesla P100 enables a new class of servers that can deliver the performance of hundreds of CPU server nodes.

- Major new software updates in 7 key areas: cuDNN 5, a GPU-accelerated library of primitives for deep neural networks; CUDA 8, the latest version of the company’s parallel computing platform; an HD mapping solution for self-driving cars; NVIDIA Iray, a photorealistic rendering solution for VR applications; NVIDIA GPU Inference Engine (GIE), a high-performance neural network inference solution for application deployment; new technologies for NVIDIA GameWorks; and new features for the VRWorks suite of APIs, sample code and libraries for VR developers.

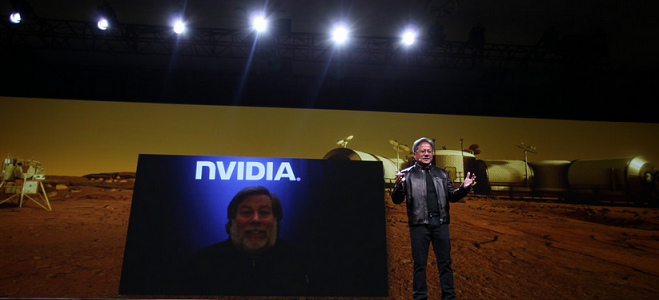

- On the fun side was the new Mars 2030 VR experience, developed with FUSION Media with advice from NASA, and demoed by personal computing pioneer Steve Wozniak (who wants to be the first human to go on a one-way mission to the red planet).

- Roborace – the world’s first autonomous car race.

In summary, I had a blast at my first GTC. The only downside was that I wasn’t on-site long enough to absorb everything, or even a fraction of all the great talks on deep learning and AI. But no worries, I treated my attendance as a learning experience and I fully intend to drill down on many areas of interest after the fact (starting with this field report). As I sat in the conference press room watching the frenetic activity of the attendees passing by, I anticipated hours of fun digesting all that I saw. Look for many future articles here on insideBIGDATA that cover GPU technology, NVIDIA, the vendors I met, as well as leading-edge research taking place in this space. I’m excited, and I hope you are too!

Contributed by: Daniel D. Gutierrez, Managing Editor of insideBIGDATA. He is also a practicing data scientist through his consultancy AMULET Analytics.
