This is the fifth entry in an insideBIGDATA series that explores the intelligent use of big data on an industrial scale. This series, compiled in a complete Guide, also covers the exponential growth of data and the changing data landscape, as well as realizing a scalable data lake. The fifth entry in the series focuses on the HPE Workload and Density Optimized System.

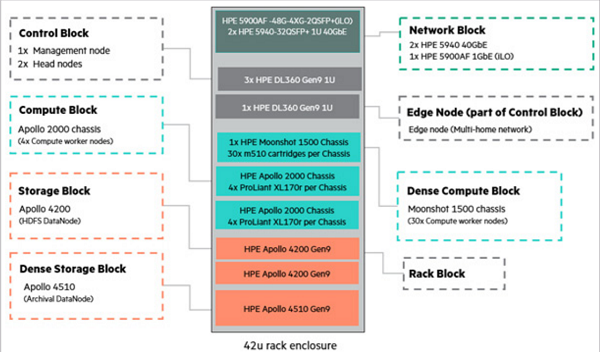

As Hadoop adoption expands in the enterprise, it is common to see clusters running workloads on a variety of technologies and Hadoop distributions in both development and production environments. This leads to data duplication and cluster sprawl. The HPE Workload and Density Optimized (HPE WDO) system allows data and isolated workload clusters to be consolidated onto a shared, centrally managed platform. It gives organizations the flexibility to scale compute and storage as required, using modular building blocks for maximum performance and density. HPE WDO building blocks and accelerators provide the flexibility to optimize each workload and access a central pool of data for batch, interactive, and real-time analytics.

The HPE Workload and Density Optimized System provides maximum elasticity for organizations to build and scale their infrastructure in alignment with the requirements of existing analytics technologies.

For example, a pilot environment might deploy a small number of symmetrically configured, storage-density optimized servers for data staging and basic MapReduce workloads. High-latency compute nodes can then be added, with the existing symmetric nodes repurposed as storage nodes through YARN labels, effectively migrating to an asymmetric architecture without adding storage. Finally, a low-latency tier of density optimized accelerator blocks with SSDs or NVMe flash can be added to support real-time time series analysis of large datasets.

By adding accelerator nodes and adjusting the compute-to-storage node ratio block by block, organizations can tune their cluster toward business initiatives at the rack level. This architecture maximizes infrastructure density, agility, efficiency, and performance while minimizing time-to-value, operating costs, and datacenter real estate.
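To make the block-by-block evolution concrete, here is a minimal Python sketch that models a cluster as a mapping from node names to role labels and tracks the compute-to-storage ratio as blocks are added. The node counts, role names, and helper functions (`add_nodes`, `relabel`, `compute_to_storage`) are hypothetical illustrations. They do not call YARN or any HPE tooling; `relabel` only mimics the effect of reassigning YARN node labels.

```python
from collections import Counter


def add_nodes(cluster: dict[str, str], prefix: str, count: int, role: str) -> None:
    """Add `count` nodes with the given role (hypothetical naming scheme)."""
    start = len(cluster)
    for i in range(count):
        cluster[f"{prefix}{start + i:02d}"] = role


def relabel(cluster: dict[str, str], old: str, new: str) -> None:
    """Reassign every node carrying role `old` to role `new`.

    Stands in for repurposing nodes via labels; no YARN API is called.
    """
    for node, role in cluster.items():
        if role == old:
            cluster[node] = new


def compute_to_storage(cluster: dict[str, str]) -> float:
    """Ratio of compute-only nodes to storage-only nodes."""
    counts = Counter(cluster.values())
    if counts["storage"] == 0:
        raise ValueError("cluster has no storage-only nodes")
    return counts["compute"] / counts["storage"]


# Hypothetical evolution of a pilot cluster (all counts are illustrative).
cluster: dict[str, str] = {}
add_nodes(cluster, "sym", 6, "symmetric")    # pilot: nodes do compute and storage
relabel(cluster, "symmetric", "storage")     # repurpose symmetric nodes as storage
add_nodes(cluster, "cmp", 12, "compute")     # add a compute block, no new storage
add_nodes(cluster, "acc", 2, "accelerator")  # low-latency accelerator tier

print(Counter(cluster.values()))
print(compute_to_storage(cluster))
```

In this model, adding a compute block changes the ratio without touching storage, which is the asymmetric scaling the paragraph describes; the accelerator tier is tracked separately so it does not skew the compute-to-storage ratio.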

As modern workloads demand evolving levels of storage and compute capacity, the HPE WDO architecture provides density optimized building blocks to target latency, capacity, and performance concerns.

Over the next few weeks, this series on the use of big data on an industrial scale will cover the following additional topics:

- The Exponential Growth of Data

- The Changing Data Landscape

- Realizing a Scalable Data Lake

- The HPE Elastic Platform for Big Data Analytics

- The Five Blocks of the HPE WDO Solution

You can also download the complete report, “insideBIGDATA Guide to the Intelligent Use of Big Data on an Industrial Scale,” courtesy of Hewlett Packard Enterprise.
