ODPi Publishes Operations Specification Providing Developers Consistency Across Application Management Tools

ODPi, a nonprofit organization accelerating the open ecosystem of big data solutions, announced the availability of ODPi 2.0, which includes the first release of the ODPi Operations Specification and the Runtime Specification 2.0, to standardize the development model for big data solution and application providers and help enterprises improve installation and management of Hadoop-based applications.

MapR Event Recap: It’s All About Digital Transformation

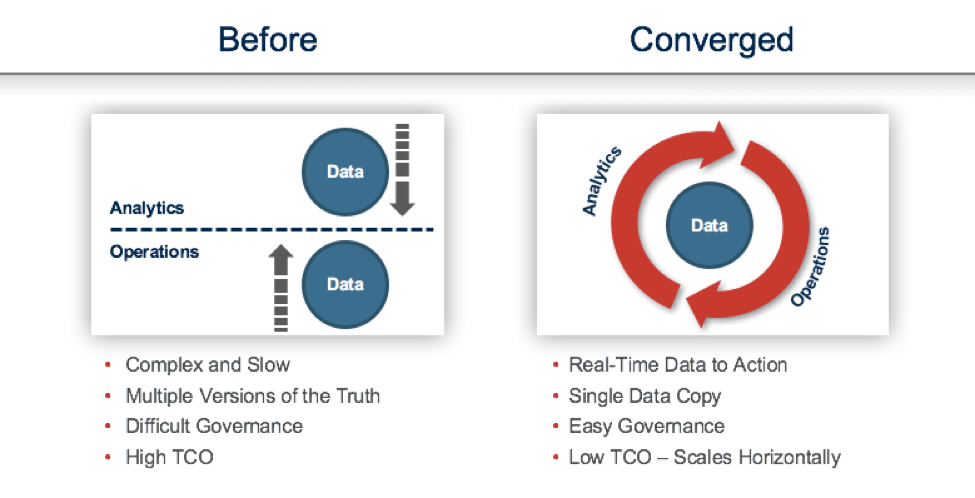

Last week saw two compelling local big data events here in SoCal, both sponsored by MapR. I thought I’d provide a short recap of the events for those who were unable to attend. I was on a panel for the first event, “Digital Transformation in Big Data,” and the discussion revolved around MapR’s unique vision for the “3 Keys to Digital Transformation.” These points are described in detail in a recent blog post.

New Business Intelligence Performance Benchmark Reveals Strong Innovation Amongst Open-Source Projects

AtScale, the company providing business users with speed, security and simplicity for BI on Hadoop, released the results of its reference performance study: The Business Intelligence Benchmark for SQL-on-Hadoop engines.

3 Reasons In-Cluster Analytics is a Big Deal

In this special technology white paper, 3 Reasons In-Cluster Analytics is a Big Deal, you’ll learn about how recent technology advances within the Apache Hadoop ecosystem have provided a big boost to Hadoop’s viability as an analytics environment—above and beyond just being a good place to store data.

Distributed System Architectures for Healthcare and Life Sciences

The insideBIGDATA Guide to Healthcare & Life Sciences is a useful new resource directed toward enterprise thought leaders who wish to gain strategic insights into this exciting new area of technology. This segment focuses on the use of distributed system architectures – Hadoop and Spark.

Looker for Hadoop – Interactive Analytics, Visualizations and Data Modeling on large Hadoop Data Sets

In this special technology white paper, Looker for Hadoop – Interactive Analytics, Visualizations and Data Modeling on large Hadoop Data Sets, you’ll learn how the vision of Hadoop as more than a data store is finally a reality.

Cask Releases Preview of First Unified Integration Platform for Big Data

Cask, the company that makes building and running big data solutions easy, announced a public preview release of CDAP 4, the first unified integration platform for big data.

Independent Study Confirms RedPoint Global Delivers Optimum Precision for Big Data Management

RedPoint Global, a leading provider of data management and customer engagement technology, announced findings from a new benchmark study conducted by information management leader MCG Global Services, which revealed leading performance from RedPoint Global’s Data Management™ application.

Cloudera’s Analytic Database Enables Unrivaled Elastic Scale, Agility, and Performance for BI and Analytics in the Cloud

Cloudera, the provider of the secure data management and analytics platform built on Apache Hadoop and the latest open source technologies, released benchmark results that validate that Cloudera’s modern analytic database solution, powered by Apache Impala (incubating), not only delivers unprecedented capabilities for cloud-native workloads but does so at better cost performance than alternatives.

Informatica Delivers Out-of-the-Box Data Lake Management Solution

Informatica®, the provider of data management solutions, offers business and IT a comprehensive, self-service approach that dramatically shortens the time to value with cloud-ready data lakes out of the box.