I recently caught up with Dr. Michael Ernst, Director of the RHIC and ATLAS Computing Facility at Brookhaven National Laboratory, to discuss how the laboratory has found an innovative and inexpensive way to use AWS Spot Instances when working with CERN’s LHC ATLAS experiment in order to speed up research during critical time periods. Dr. Ernst is currently a staff member of the Physics Department and the director of the RHIC and ATLAS Computing Facility (RACF) at Brookhaven National Laboratory. In his 35-year career, he has made major contributions to the evolution of computing services in high energy and nuclear physics. Particular areas include coordinating international networking in Europe, and designing and implementing distributed, grid-enabled storage solutions that became foundational elements in LHC computing. He has worked at Deutsches Elektronen Synchrotron (DESY) in Germany, CERN in Switzerland, and Fermi National Accelerator Laboratory (Fermilab) near Chicago. He holds a Ph.D. in computational science from Technical University Berlin.

Daniel D. Gutierrez – Managing Editor, insideBIGDATA

insideBIGDATA: Can you describe the LHC ATLAS project? What are you trying to accomplish?

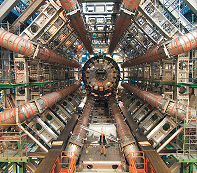

Dr. Michael Ernst: The Large Hadron Collider, based at CERN (l’Organisation européenne pour la recherche nucléaire), is one of the largest international scientific endeavors ever formed and seeks to unlock mysteries of the universe hidden in the depths of particle physics. ATLAS is one of seven main detectors used at CERN to detect the fleeting particle shrapnel formed when high-energy particles collide at nearly the speed of light. The work we are pursuing is at the very edge of human understanding and is allowing us to explore the universe and test hypotheses in a truly revolutionary way. For example, in 2012, our work helped lead to the discovery and confirmation of the Higgs boson, a particle predicted by the Standard Model but never before detected.

In the future, the LHC and the ATLAS detector may help us uncover secrets that remain veiled to us, including the posited existence of extra dimensions, the mystery of dark matter, and why matter reigned over antimatter in the early days of the universe. In many ways, this is the Hubble Telescope of the particle physics world: a tool that is allowing us to peer deeper than ever before into the mysteries of our universe and revolutionize the way we conduct science and research.

insideBIGDATA: What is the scope of the project in terms of collaborators, data collection, etc.?

Dr. Michael Ernst: The ATLAS Experiment at the LHC has represented the extreme limit of data-intensive scientific computing since its physics program began in 2010. In its first running period, which lasted until spring 2013, ATLAS accumulated a data set of about 150 petabytes (PB). Processing it consumed about 4 million CPU-hours per day at about 140 computing centers around the world, which together processed over an exabyte of data per year. This puts us in the same league as some of the biggest data users on the planet, like Facebook and Google.

Data from the ATLAS detector creates a massive data flow. When running, ATLAS has a raw data rate of around 1 PB/s, which is reduced by filters to 800 MB/s for relevant real-time research. In all, we generate and store roughly 30 PB/year of data for our research purposes. That data then needs to be copied and shared so that it can be used by more than 3,000 scientists and collaborators around the globe.
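To put the filtering step in perspective, the short calculation below works out the reduction factor implied by the two rates quoted above. It is only an illustrative sketch: it assumes decimal (SI) units, and the function name `reduction_factor` is made up for this example.

```python
# Illustrative arithmetic only; assumes decimal (SI) units.
PB = 10**15  # bytes in one petabyte
MB = 10**6   # bytes in one megabyte


def reduction_factor(raw_bytes_per_s: float, filtered_bytes_per_s: float) -> float:
    """Return how many times smaller the filtered stream is than the raw one."""
    return raw_bytes_per_s / filtered_bytes_per_s


if __name__ == "__main__":
    factor = reduction_factor(1 * PB, 800 * MB)
    print(f"{factor:,.0f}")  # prints "1,250,000"
```

In other words, under these assumptions the filters keep roughly one byte out of every 1.25 million produced by the detector.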

insideBIGDATA: What is Brookhaven’s role in the ATLAS project?

Dr. Michael Ernst: Brookhaven National Laboratory is the lead facility in the United States’ collaboration on the LHC ATLAS project. Its role is to help provide the necessary computing power for research on ATLAS data. We are also one of 11 Tier 1 data centers around the globe that function as the primary storage and computing facilities for the ATLAS project.

insideBIGDATA: What are the primary computing challenges associated with the ATLAS project?

Dr. Michael Ernst: At Brookhaven, we have the ability to spool up about 50,000 computing cores for research. However, adding capacity beyond that has traditionally been difficult in times of peak demand, and it is hard to maintain the necessary 99 percent availability 365 days a year. We looked for solutions that would allow us to quickly and efficiently spool up additional computing cores at a low cost for periods of peak demand.

The computing-limited science of ATLAS has driven the experiment to ambitiously extend its computing to new resources including cost-effective commercial clouds.

AWS Spot Instances seemed to fill that gap; however, there was another challenge to overcome.

The nature of Spot Instances is that they are sold to the highest bidder. That meant that, in principle, Spot Instances could be terminated mid-computation.

Using such resources fully and efficiently therefore demands rethinking the processing workflow. That led an ATLAS team to develop the ATLAS Event Service, which supports agile, fine-grained workflows that can flexibly shape themselves to available resources and deposit their outputs in fine-grained object stores such as S3 in near real time.
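The idea of a fine-grained workflow that survives interruptions can be sketched in a few lines. The example below is not the ATLAS Event Service itself; it is a minimal illustration under stated assumptions: a plain dictionary stands in for an object store such as S3, `process_event` is a hypothetical placeholder for real event processing, and instance termination is simulated with a random draw.

```python
import random


def process_event(event_id: int) -> str:
    """Hypothetical stand-in for processing a single collision event."""
    return f"result-for-event-{event_id}"


def run_worker(event_ids, object_store, is_preempted) -> int:
    """Process events one at a time, storing each output immediately.

    Returns the number of events this worker completed before stopping.
    """
    done = 0
    for event_id in event_ids:
        if event_id in object_store:
            continue  # output already stored by an earlier worker
        if is_preempted():
            break  # simulated termination: only unfinished events remain
        object_store[event_id] = process_event(event_id)
        done += 1
    return done


def run_until_complete(event_ids, object_store, preempt_probability, seed=0) -> int:
    """Keep starting simulated workers until every event has an output.

    Returns the number of workers (simulated instances) that were started.
    """
    event_ids = list(event_ids)
    rng = random.Random(seed)
    workers_used = 0
    while any(e not in object_store for e in event_ids):
        run_worker(event_ids, object_store,
                   lambda: rng.random() < preempt_probability)
        workers_used += 1
    return workers_used


if __name__ == "__main__":
    store = {}  # assumption: a dict standing in for an object store
    workers = run_until_complete(range(20), store, preempt_probability=0.2)
    print(f"{len(store)} events stored using {workers} simulated instances")
```

Because each output is written as soon as its event finishes, a terminated worker in this sketch loses none of the results it has already stored, and a replacement worker simply skips what is already there.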

Another important consideration is connectivity. For the cloud to be a viable option in a worldwide distributed computing environment, cloud resources needed to be seamlessly integrated with the existing Energy Sciences Network (ESnet), the Department of Energy’s dedicated science network, to provide global connectivity and to facilitate petascale data transfers at a throughput of several gigabytes per second.

We ran a pilot program in September 2015 to test the reliability and efficacy of AWS Spot Instances. We successfully integrated the cloud with ESnet and saw that less than 1 percent of instances were terminated over the five-day test period, indicating that AWS Spot Instances were a viable option for rapid compute resource provisioning in the future.

Being able to add computing resources dynamically allows us to be nimble when demand rises; we can provision thousands of compute cores within a few hours, something that is impossible with traditional infrastructure.
