Sponsored Post

Finding efficient ways to compress and decompress data is more important than ever. Compressed data takes up less space and requires less time and network bandwidth to transfer. In cloud services, efficient compression cuts storage costs. In IoT edge applications, compression improves analytics performance. In mobile and client applications, high-performance compression can improve communication efficiency, providing a better user experience.

However, compression and decompression consume processor resources, and in data-intensive applications this can degrade overall system performance. An optimized implementation of the compression algorithms therefore plays a crucial role in minimizing that impact. Intel® Integrated Performance Primitives (Intel® IPP) is a library of highly optimized functions for various domains, including lossless data compression. Intel® IPP offers developers high-quality, production-ready, low-level building blocks for image processing, signal processing, and data processing (data compression/decompression and cryptography) applications. Its functions are highly optimized for a wide range of Intel® architectures (Intel® Quark™, Intel Atom®, Intel® Core™, Intel® Xeon®, and Intel® Xeon Phi™ processors). These ready-to-use, royalty-free APIs are used by software developers, integrators, and solution providers to tune their applications and get the best performance.

In this article, we’ll discuss these functions and the latest improvements. We will examine their performance and explain how these functions are optimized to achieve the best performance. We will also explain how applications can decide which compression algorithm to use based on workload characteristics.

Intel® IPP Data Compression Functions

The Intel IPP Data Compression Domain provides optimized implementations of common data compression algorithms, including BZIP2, ZLIB, LZO, and a new LZ4 function, which will be available in the Intel IPP 2018 update 1 release. These implementations provide “drop-in” replacements for the original compression code, so moving existing data compression code to Intel IPP-optimized code is easy.

These data compression algorithms are basic building blocks in many applications, so a wide variety of applications can benefit from Intel IPP. A typical example is Intel IPP ZLIB compression. ZLIB is the fundamental compression method for various file archivers (e.g., gzip*, WinZip*, and PKZIP*), portable network graphics (PNG) libraries, network protocols, and some Java* compression classes. An application that links ZLIB statically simply needs to relink with the Intel IPP ZLIB library. If the application uses ZLIB as a dynamic library, it can easily be switched to a new dynamic ZLIB library built with Intel IPP. In the latter case, relinking the application is not required.
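Because a drop-in replacement keeps the standard ZLIB interface, the calling code stays the same. As an illustration only, the sketch below uses Python's built-in `zlib` module, which wraps whichever ZLIB library the interpreter was built or linked against. Whether that library is an Intel IPP-optimized build is an assumption about the deployment and cannot be seen from this code. The helper name `compress_roundtrip` and the sample payload are hypothetical.

```python
import zlib


def compress_roundtrip(payload: bytes, level: int = 6) -> tuple[bytes, bytes]:
    """Compress and decompress with the standard ZLIB API.

    The calls are identical whether the underlying ZLIB library is the
    reference build or an optimized replacement (an assumption about the
    deployment, not something this function checks).
    """
    compressed = zlib.compress(payload, level)
    restored = zlib.decompress(compressed)
    return compressed, restored


# Hypothetical, highly repetitive sample payload.
payload = b"sensor=42;status=ok;" * 1000
compressed, restored = compress_roundtrip(payload)

print(len(payload), "->", len(compressed), "bytes; lossless:", restored == payload)
# Version string of the ZLIB library actually loaded at run time.
print("zlib runtime version:", zlib.ZLIB_RUNTIME_VERSION)
```

Swapping the dynamic library underneath would change how fast these calls run, not how they are written.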


Intel IPP Data Compression Optimization

How does Intel IPP optimize data compression to achieve top performance? It uses several different methods. Data compression algorithms are hard to optimize on modern platforms because of their strong data dependency (i.e., the behavior of the algorithm depends on the input data). In practice, this means that only a few new CPU instructions can be used to speed up these algorithms. Single instruction, multiple data (SIMD) instructions apply only in limited cases, mostly pattern searching.
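To make "pattern searching" concrete, here is a minimal sketch of the inner loop found in many dictionary-based compressors: measuring how long two positions in a buffer keep matching. It is not Intel IPP's implementation. The function names are hypothetical, and Python integers stand in for 64-bit registers. The word-wise variant compares eight bytes per step and locates the first mismatching byte from the XOR of the two words. Comparing several bytes per step is the same idea that wider SIMD comparisons build on.

```python
def match_length_bytewise(buf: bytes, a: int, b: int, limit: int) -> int:
    """Count equal bytes starting at buf[a] and buf[b], one byte per step.

    The caller must ensure a + limit and b + limit do not exceed len(buf).
    """
    n = 0
    while n < limit and buf[a + n] == buf[b + n]:
        n += 1
    return n


def match_length_wordwise(buf: bytes, a: int, b: int, limit: int) -> int:
    """Same result as the byte-wise version, comparing 8 bytes per step."""
    n = 0
    while n + 8 <= limit:
        word_a = int.from_bytes(buf[a + n:a + n + 8], "little")
        word_b = int.from_bytes(buf[b + n:b + n + 8], "little")
        diff = word_a ^ word_b
        if diff:
            # Index of the lowest set bit; dividing by 8 gives the number
            # of leading bytes that were equal (little-endian layout).
            lowest_bit = (diff & -diff).bit_length() - 1
            return n + lowest_bit // 8
        n += 8
    # Fewer than 8 bytes remain: finish one byte at a time.
    while n < limit and buf[a + n] == buf[b + n]:
        n += 1
    return n


history = b"abcdefghij" + b"abcdefghiX"
print(match_length_bytewise(history, 0, 10, 10),
      match_length_wordwise(history, 0, 10, 10))
```

The data dependency is visible here: how many iterations run, and where the loop exits, depends entirely on the input bytes.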

Performance optimization of data compression in Intel IPP takes place in different ways and at different levels:

- Algorithmic optimization – At the highest level, algorithmic optimization provides the greatest benefit. The data compression algorithms are implemented from scratch in Intel IPP to process input data and to generate output in the most effective way.

- Data optimization – Careful planning of internal data layout and size also brings performance benefits. Proper alignment of data makes it possible to save CPU clocks on data reads and writes.

- New CPU instructions – Intel® Streaming SIMD Extensions 4.2 (Intel® SSE 4.2) introduced several CPU instructions that data compression algorithms can use where the nature of the algorithm allows.

- Intel IPP data compression performance – Each Intel IPP function includes multiple code paths, with each path optimized for specific generations of Intel® and compatible processors.

Since data compression is a trade-off between compression performance and compression ratio, there is no “best” compression algorithm for all applications. For example, the BZIP2 algorithm achieves a good compression ratio, but because it is more complex, it requires significantly more CPU time for both compression and decompression.
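One way to see this trade-off on your own data is to time two codecs and compare their ratios. The sketch below uses the reference ZLIB and BZIP2 bindings from the Python standard library, not Intel IPP, and the synthetic `sample` data and the `measure` helper are hypothetical. Actual numbers depend on the input data and the machine, so none are quoted here.

```python
import bz2
import time
import zlib


def measure(name, compress, decompress, data):
    """Return the compression ratio and wall-clock timings for one codec."""
    t0 = time.perf_counter()
    packed = compress(data)
    t1 = time.perf_counter()
    unpacked = decompress(packed)
    t2 = time.perf_counter()
    assert unpacked == data  # lossless round trip
    return {
        "name": name,
        "ratio": len(data) / len(packed),
        "compress_s": t1 - t0,
        "decompress_s": t2 - t1,
    }


# Synthetic log-like records; real workloads will behave differently.
sample = b"".join(
    b"id=%d;temp=%d;state=ok\n" % (i, i % 37) for i in range(50_000)
)

results = [
    measure("zlib", zlib.compress, zlib.decompress, sample),
    measure("bz2", bz2.compress, bz2.decompress, sample),
]

for r in results:
    print(f"{r['name']:>5}: ratio {r['ratio']:.2f}, "
          f"compress {r['compress_s'] * 1e3:.1f} ms, "
          f"decompress {r['decompress_s'] * 1e3:.1f} ms")
```

Running the same measurement on representative production data gives a more reliable basis for choosing a codec than any general ranking.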

How should an application choose a compression algorithm? The choice often involves a number of factors:

- Compression performance to meet the application’s requirement

- Compression ratio

- Compression latency, which is especially important in some real-time applications

- Memory footprint to meet some embedded target application requirements

- Conformance with standard compression methods, enabling the data to be decompressed by any standard archive utility

- Other factors as required by the application

Choosing a compression algorithm for a specific application requires balancing all of these factors against the workload's characteristics and requirements.

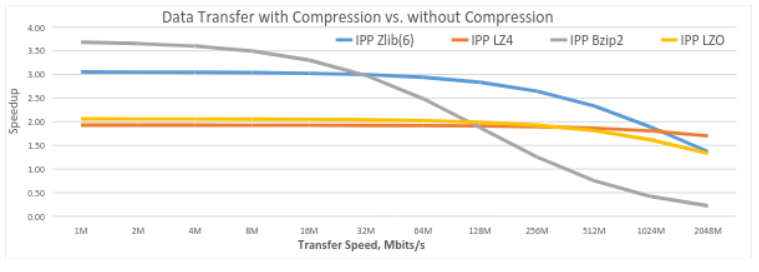

The figure shows the overall performance of the common Intel IPP compression algorithms at different network speeds. Tests were run on the Intel® Core™ i5-4300U processor. On lower-bandwidth networks (e.g., 1 Mbps), an algorithm that achieves a high compression ratio matters most, because it reduces the amount of data and therefore the transmission time. In our test scenario, Intel IPP BZIP2 achieved the best performance (approximately 2.7x speedup) compared to uncompressed transmission. However, when the data is transferred over a higher-performance network (e.g., 512 Mbps), LZ4 had the best overall performance, since it strikes a better balance between compression time and transmission time in this scenario.
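The reasoning behind this result can be sketched with a simple cost model: end-to-end time is compression time plus transmission time of the compressed data plus decompression time. The model below assumes the three stages run one after another with no pipelining, which is a simplification. The two codec profiles are made-up placeholder values chosen to illustrate the crossover. They are not measurements of Intel IPP or of any real codec, and all names are hypothetical.

```python
def end_to_end_seconds(size_mb, link_mbit_s, ratio=1.0,
                       comp_mb_s=float("inf"), decomp_mb_s=float("inf")):
    """Time to compress, send, and decompress size_mb megabytes.

    Assumes sequential stages (no overlap). The defaults model an
    uncompressed transfer: ratio 1 and zero codec time.
    """
    compress = size_mb / comp_mb_s
    send = (size_mb / ratio) * 8 / link_mbit_s  # megabytes -> megabits
    decompress = size_mb / decomp_mb_s
    return compress + send + decompress


# Placeholder profiles, NOT benchmark results.
PROFILES = {
    "slow_high_ratio": {"ratio": 4.0, "comp_mb_s": 10.0, "decomp_mb_s": 30.0},
    "fast_low_ratio": {"ratio": 2.0, "comp_mb_s": 400.0, "decomp_mb_s": 1000.0},
}


def speedup(profile, size_mb, link_mbit_s):
    """Speedup of a compressed transfer relative to sending raw data."""
    raw = end_to_end_seconds(size_mb, link_mbit_s)
    return raw / end_to_end_seconds(size_mb, link_mbit_s, **PROFILES[profile])


def best_profile(size_mb, link_mbit_s):
    """Name of the profile with the highest speedup on this link."""
    return max(PROFILES, key=lambda name: speedup(name, size_mb, link_mbit_s))


for link in (1, 512):
    print(f"{link} Mbit/s -> best: {best_profile(100, link)}")
```

On the slow link, transmission dominates and the higher ratio wins. On the fast link, codec time dominates and the faster codec wins, which mirrors the pattern described above.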

Data transfer with and without compression

Optimized Data Compression

Data storage and management are becoming high priorities for today’s data centers and edge-connected devices. Intel IPP offers highly optimized, easy-to-use functions for data compression. New CPU instructions and improved algorithm implementations enable these functions to deliver significant performance gains across a wide range of applications and architectures. Intel IPP also covers the common lossless compression algorithms, so applications with different needs can each pick a suitable one.
