In this regular column, we’ll bring you all the latest industry news centered around our main topics of focus: big data, data science, machine learning, AI, and deep learning. Our industry is constantly accelerating with new products and services being announced every day. Fortunately, we’re in close touch with vendors from this vast ecosystem, so we’re in a unique position to inform you about all that’s new and exciting. Our massive industry database is growing all the time, so stay tuned for the latest news items describing technology that may make you and your organization more competitive.

Actian Launches Industry-First Real-Time Connected Cloud Data Warehouse Solution

Actian, a leader in hybrid cloud data warehousing and data integration, today announced its Avalanche™ Real-Time Connected Data Warehouse Solution with support for AWS and Azure cloud platforms. The Actian hybrid solution is the industry’s first and only cloud data warehouse to offer complete integration capabilities natively built into the product. It enables enterprises to rapidly and inexpensively harness their diverse data sources for use in high performance analytics, both in the cloud and on-premise.

Actian’s Avalanche Real-Time Connected Data Warehouse was designed for these unprecedented times of business uncertainty, during which real-time decision-making is critical for survival and success. The Actian solution delivers a set of distinctive capabilities that go beyond a traditional cloud data warehouse, such as blazing-fast analytics, real-time data ingestion, and a true hybrid architecture that can run in the cloud and on-premise. The result is rapid, impactful decision making coupled with substantial near-term cost savings.

“Actian realizes that budgets are constrained in today’s unprecedented business environment. We are so confident in our solution’s ability to bring game changing value to our customers, that we are offering our guarantee that it will cut Snowflake customers’ aggregate costs in half,” said Vikas Mathur, GM of Actian’s Avalanche data warehouse business. “We want to encourage people to try Avalanche and experience the promise of high-performance hybrid analytics coupled with low, predictable pricing firsthand.”

WekaIO Introduces Weka AI to Enable Accelerated Edge to Core to Cloud Data Pipelines

WekaIO™ (Weka), a leader in high-performance and scalable file storage, and an NVIDIA Partner Network Solution Advisor, introduced Weka AI™, a transformative storage solution framework underpinned by the Weka File System (WekaFS™) that enables accelerated edge-to-core-to-cloud data pipelines. Weka AI is a framework of customizable reference architectures (RAs) and software development kits (SDKs) with leading technology alliances like NVIDIA, Mellanox, and others in the Weka Innovation Network (WIN)™. Weka AI enables chief data officers, data scientists and data engineers to accelerate genomics, medical imaging, the financial services industry (FSI), and advanced driver-assistance systems (ADAS) deep learning (DL) pipelines. In addition, Weka AI easily scales from entry to large integrated solutions provided through VARs and channel partners.

Artificial Intelligence (AI) data pipelines are inherently different from traditional file-based IO applications. Each stage within AI data pipelines has distinct storage IO requirements: massive bandwidth for ingest and training; mixed read/write handling for extract, transform, load (ETL); ultra-low latency for inference; and a single namespace for entire data pipeline visibility. Furthermore, AI at the edge is driving the need for edge-to-core-to-cloud data pipelines. Hence, the ideal solution must meet all these varied requirements and deliver timely insights at scale. Traditional solutions lack these capabilities and often fall short in meeting performance and shareability across personas and data mobility requirements. Today’s industries demand solutions that overcome these limitations for AI data pipelines. The solutions must provide data management that delivers operational agility, governance, and actionable intelligence by breaking silos.

Weka AI is architected to deliver production-ready solutions and accelerate DataOps by solving the storage challenges common with IO-intensive workloads such as AI. It helps accelerate the AI data pipeline, delivering more than 73 GB/sec of bandwidth to a single GPU client. In addition, it delivers operational agility with versioning, explainability, and reproducibility and provides governance and compliance with in-line encryption and data protection. Engineered solutions with partners in the WIN program ensure that Weka AI will provide data collection, workspace and deep neural network (DNN) training, simulation, inference, and lifecycle management for the entire data pipeline.

“GPUDirect Storage eliminates IO bottlenecks and dramatically reduces latency, delivering full bandwidth to data-hungry applications,” said Liran Zvibel, CEO and Co-Founder, WekaIO. “By supporting GPUDirect Storage in its implementations, Weka AI continues to deliver on its promise of highest performance at any scale for the most data-intensive applications.”

QB Launches First Options on Futures Execution Algorithm, “Striker”

Quantitative Brokers (QB), an independent provider of advanced execution algorithms and data-driven analytics for futures and U.S. Cash Treasury markets, today announced the launch of a first-ever, intelligent algorithm for options on futures markets. Striker joins QB’s suite of award-winning best execution algorithms: Bolt, Strobe, Legger, Closer, Octane and The Roll.

Striker is the first dynamic agency algorithm for options on futures markets that incorporates both real-time cointegration and implied pricing calculations to determine fair value. The strategy also employs QB’s industry-leading, dynamic passive and aggressive child order placement logic. Striker transactions are also seamlessly displayed in QB’s complementary Transaction Cost Analysis (TCA) platform, another industry first.

“In an unprecedented time, with the CME floor closed due to the current global crisis with COVID-19,” said Christian Hauff, Co-Founder and CEO of QB, “we are thrilled to bring this much needed solution to the market which has been seeking an intelligent and purpose built agency algorithm for the options on futures market. We look forward to continuing to fill this need on global exchanges as QB continues to expand.”

Arm Treasure Data launches new CDP capabilities and unlocks value for customers such as Linden Lab and Anheuser-Busch InBev

Arm® Treasure Data™ introduced new product capabilities for its Customer Data Platform (CDP) that better empower global companies, such as Linden Lab® and Anheuser-Busch InBev (AB InBev), to navigate the increasing complexity of customer acquisition. Included are a Customer Journey Orchestration early access program to help companies deliver relevant and engaging brand experiences, 20 new connectors for streamlined integration and expanded worldwide Professional Services including new security and administration features.

“Longer and complex customer buying journeys are driving higher acquisition and retention costs,” said Rob Parrish, senior director of product management, Arm Treasure Data. “The combination of our CDP capabilities for unifying data and journey orchestration tools enables companies to better manage customer touchpoints across the entire buying lifecycle through a single platform.”

OnCrawl injects Data Science into its SEO platform to help companies predict their online performance

OnCrawl just released a new platform called OnCrawl Labs entirely based on Data Science and Machine Learning. Its goal is to allow companies to predict their online performance with robust data and gain a competitive advantage. Relying on Google Colab and using the languages Python and R, OnCrawl Labs offers a portfolio of machine learning algorithms to address strategic SEO issues and offer features not yet available on the SEO market.

Ascend.io and Looker Unify ETL Across Data Lakes, Warehouses, and Pipelines

Ascend.io, the data engineering company, announced the availability of a native integration between the Ascend and Looker platforms. This collaboration closes the enormous gap between enterprise data engineering and data analysis platforms, unlocking access for the first time to live data pipeline sources for their business intelligence practices.

Until now, upstream data sources and systems have been the domain of ETL and data engineering, siloed in software development teams away from the more established business analytics teams that work with Looker and other SQL-based business intelligence tools. As a result, business analytics teams lost time and productivity waiting for the data they needed, while data engineering teams faced an ever-growing backlog of data requests that was impossible to keep up with.

“Analysts across the enterprise increasingly need to harness the business value of data found beyond data warehouses,” said Shohei Narron, technology partner manager at Looker, which joined with Google Cloud in February of 2020. “Ascend brings an unprecedented capability to the Looker ecosystem with which BI teams and analysts can self-serve live data directly from data lakes and pipelines in their SQL statement. As a result, Looker visualizations, LookML models, and Looker-based APIs can harness data pipelines with no further ETL synchronization required.”

Starburst Announces Major Software Release Boosting Presto Security & Performance

Starburst, the Presto company, announced core features in the Q1 release of Starburst Enterprise Presto, as well as Presto version 332, that significantly bolster Presto performance, security, and value for the data science community.

Presto was created in 2012 at Facebook by David Phillips, Martin Traverso, and Dain Sundstrom, and open-sourced in 2013. Today, thousands of companies across the globe use Presto to provide fast & interactive query performance while effectively scaling costs on big data infrastructure. Starburst was founded in 2017 to offer enterprise-grade Presto to the masses, delivering security, performance, and support at scale. Starburst employs the creators and leading committers of the open source project.

This major release combines features that have been contributed back to the open source project as well as curated for Starburst Enterprise Presto customers.

“It’s essential that we keep pushing the envelope on the speed of data access while expanding the security features our customers need,” said Matt Fuller, Starburst Co-Founder and VP, Product. “This release is heavily driven by customer demand for faster data access, while controlling who has access to what data, across every data source.”

Atrium Launches ‘Jumpstart’ Offering for Delivering Einstein Predictions to Tableau Customers

Atrium, a next-generation consulting company that leads enterprises through business transformation with AI and analytics, announced a packaged service offering focused on helping deliver machine learning predictions to Tableau customers.

The service offering is a starter program that helps companies explore how to build predictive models in Einstein Discovery and surface those predictions within Tableau. As a part of the Jumpstart, Atrium will help customers to orchestrate external customer data sources scored by Einstein Predictions, along with managing authentication and data protocols required to deliver those predictions into Tableau. The goal is to provide Tableau customers with a convenient and affordable way to get started in their AI/ML journey.

“Our customers are looking to evolve their Tableau deployments through investments in machine learning and predictive capabilities to provide deeper and more actionable insights into their data,” said Jonathan Lincheck, Senior Vice President, Solution Engineering at Tableau. “The combination of Tableau and Einstein Discovery makes predictions easily accessible and will help extend the power of machine learning to more customers.”

DataStax Enterprise 6.8 Advances Cloud-Native Data and Bare-Metal Performance

DataStax announced the general availability of DataStax Enterprise (DSE) 6.8. DSE 6.8 adds new capabilities for enterprises to advance bare-metal performance, support more workloads, and enhance developer and operator experiences with Kubernetes.

Built on the foundation of Apache Cassandra™, DSE is the scale-out data infrastructure for enterprises that need to handle any workload on-premises and in any cloud on a continuously available, active-everywhere data platform.

“DataStax Enterprise 6.8 has made significant advancements in performance, ops management, and Cassandra workloads, but most importantly it adds a Kubernetes operator. This will help enterprises succeed with mission-critical, cloud-native deployments irrespective of the scale, infrastructure, or data model requirements,” said Ed Anuff, Chief Product Officer at DataStax. “DataStax Enterprise has been hardened by hundreds of enterprises over the last decade and 6.8 will prove why it continues to be the scale-out NoSQL database of choice.”

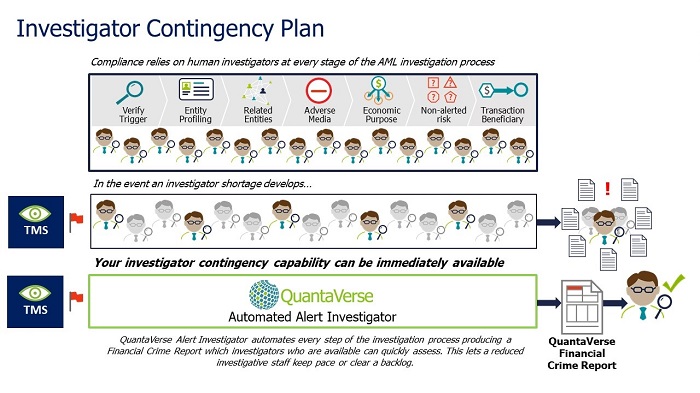

QuantaVerse Expands Capabilities of AI-Powered Financial Crime Platform with New Enhancements

QuantaVerse, the company offering an end-to-end financial crime platform that uses AI and machine learning to automate the majority of the AML investigation process, has announced new enhancements to its AI Financial Crime Platform. These capabilities were delivered to help QuantaVerse customers reduce overall AML compliance costs by automating time-consuming investigative tasks and finding criminality faster and more accurately.

“We are continually innovating and rolling out new features and improvements to meet the needs of our customers,” explained David McLaughlin, CEO and Founder of QuantaVerse. “Specifically, these new capabilities reinforce our commitment to helping customers significantly cut AML costs while remaining effective in identifying financial crime risk.”

Otonomo Expands Its Ecosystem for Connected Car Data Utilization

Otonomo, a leading automotive data services platform, announced that it is expanding the capabilities of its platform to increase the utilization of crowd data. In addition to supporting personal services for BMW and MINI connected vehicles across the globe, Otonomo will also make crowd data available for innovative new use cases that reduce city congestion and improve the driving experience. Crowd data is now available from connected BMW and MINI vehicles located in more than 44 countries, along with data from other automotive manufacturers. The Otonomo Automotive Data Services Platform increases the value of this data by reshaping it and enriching it so application and service providers can more quickly utilize it in their applications.

“Otonomo has long focused on building an ecosystem around car data that is open to many parties and inclusive for many use cases,” said Ben Volkow, Chief Executive Officer and Founder of Otonomo. “We’re excited to be able to expand this ecosystem with crowd data from BMW and MINI vehicles.”

Pepperdata Introduces New Kafka Monitoring Capabilities for Mission-Critical Streaming Applications

Pepperdata, a leader in Analytics Stack Performance (ASP), announced the availability of Streaming Spotlight, a new product in Pepperdata’s data analytics performance suite. The suite is purpose-built for IT operations teams, giving them a single, comprehensive view of their analytics stack, both in the cloud and on premises. With Streaming Spotlight, existing customers can integrate Kafka monitoring metrics into the Pepperdata dashboard, adding detailed visibility into Kafka cluster metrics, broker health, topics and partitions.

Kafka is a distributed event streaming platform and acts as the central hub for an integrated set of messaging systems. Kafka’s architecture of brokers, topics and data replication supports high availability, high-throughput and publish-subscribe environments. For some users, Kafka handles trillions of messages per day. Managing these data pipelines and systems is complex and requires deep insight to ensure these systems run at optimal efficiency.
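To make the replication idea concrete, here is a minimal sketch of one health check that a Kafka monitoring view commonly surfaces: flagging partitions whose in-sync replica set is smaller than their assigned replica set. It is an illustration only, not Pepperdata's implementation and not a Kafka client API; the `PartitionState` type, the `under_replicated` helper, and the snapshot data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PartitionState:
    """Hypothetical snapshot of one topic partition (not a Kafka client type)."""
    topic: str
    partition: int
    replicas: list   # broker ids assigned to hold a copy of the partition
    in_sync: list    # broker ids currently caught up with the leader


def under_replicated(states):
    """Return (topic, partition) pairs with fewer in-sync replicas than assigned replicas."""
    return [(s.topic, s.partition) for s in states
            if len(s.in_sync) < len(s.replicas)]


# Hypothetical three-broker cluster in which broker 3 has fallen behind.
snapshot = [
    PartitionState("orders", 0, [1, 2, 3], [1, 2, 3]),
    PartitionState("orders", 1, [2, 3, 1], [2, 1]),
    PartitionState("clicks", 0, [3, 1, 2], [1, 2]),
]
flagged = under_replicated(snapshot)
```

In this made-up snapshot, the two partitions that list broker 3 as a replica but not as in-sync are the ones flagged, which is the kind of signal an operator would use to pinpoint an unhealthy broker.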

“With this new functionality, IT teams have the visibility needed to run their streaming applications as efficiently as possible. The ability to be alerted to faults, and then pinpoint those issues in near real time for remediation is no longer a luxury in mission-critical environments,” said Ash Munshi, CEO, Pepperdata. “We’re providing an elegant solution for observability and monitoring as Kafka is becoming the de-facto standard for streaming applications.”

Qlik Makes It Easier for Every Customer to Benefit from Analytics in the Cloud

Qlik announced new packaging and adoption programs, giving customers more choice and making it simpler, easier and more cost-effective to adopt analytics in the cloud. The new programs include new packaging of Qlik Sense Enterprise with SaaS only and Client-Managed options, plus a direct path for QlikView customers to adopt Qlik Sense Enterprise SaaS with the ability to host their QlikView documents in the cloud.

“Customers are eager to leverage the scale and cost efficiencies of analytics in the cloud, and at the same time leverage augmented and actionable analytics to turn insights into action,” said James Fisher, Chief Product Officer of Qlik. “With our latest Qlik Sense offering and our new Analytics Modernization Program, aimed directly at helping QlikView customers’ journey to the cloud, it’s easier than ever for every Qlik customer to adopt and leverage cloud-based analytics and benefit from new AI and cognitive technologies across their entire organization.”

ADLINK Joins Intel and Arrow Electronics to Launch Vizi-AI™ Development Starter Kit for Industrial Machine Vision AI at the Edge

ADLINK Technology, a global leader in edge computing, has launched Vizi-AI™ with Intel providing a development starter kit (devkit) for industrial machine vision artificial intelligence (AI).

The Vizi-AI starter devkit includes an Intel Atom® based SMARC computer module with Intel® Distribution of OpenVINO™ toolkit and ADLINK Edge™ software. The devkit is now available exclusively through Arrow Electronics in the North America and EMEA regions. ADLINK previewed Vizi-AI at Embedded World in February where it was awarded ‘Best in Show’ for AI and machine learning by Embedded Computing Design.

Developers can easily connect Vizi-AI to different image capture devices and then deploy and improve machine learning models to harness insight from vision data to optimize operational decision-making. Vizi-AI includes a range of pre-built OpenVINO compatible machine learning models that can be used straight out of the box.

“ADLINK is working closely with Intel to apply artificial intelligence to edge computing and our new Vizi-AI devkit releases the potential for developers to deploy vision-based AI applications faster and easier than ever so our industrial customers can optimize operational efficiency and drive business value,” said Steve Cammish, VP Edge Solutions at ADLINK. “We have designed the Vizi-AI devkit as an integrated hardware and software solution which provides users with an ideal starting point to find business value from machine vision AI by enabling easy edge deployment of their machine learning models. This approach can then be scaled for industrial requirements using the same software but deployed on more powerful hardware as needed. This gives our customers the ultimate future-proofed flexibility, knowing at deployment time they can make their hardware solution choice, but in development they can start on our low-cost Edge AI Development kit.”

New Relic Enhances AIOps Capabilities

New Relic, Inc. (NYSE: NEWR), the comprehensive cloud-based observability platform built to help customers create more perfect software, enhanced New Relic AI, a suite of AIOps capabilities built for on-call DevOps, Site Reliability Engineering (SRE) and network operations center (NOC) teams responsible for operating modern infrastructure. New Relic AI provides advanced applied intelligence (AI) and machine learning (ML) technologies to help customers detect, diagnose and resolve incidents faster, and continuously improve incident management workflow.

DevOps and SRE teams are under increased pressure to meet service level objectives, ship software without errors, and quickly fix incidents before their customers notice. More and more frequently, teams now find themselves bombarded with alerts sent from a mix of fragmented tools making it even more difficult to detect, diagnose, and resolve potential problems. New Relic AI was designed to provide on-call teams with intelligence and automation that augments their existing incident management teams and workflows to help get closer to root cause faster.

“New Relic’s goal is to help reduce the toil and anxiety of running modern systems for engineering teams. We’re proud to report that our early-access customers reported that they have seen automatic reductions in alert noise by 50 percent — and some as much as 80 percent within days,” said Guy Fighel, GVP and Product GM at New Relic. “New Relic AI is the only solution that has the automation, intelligence and scale-out architecture needed to deliver true observability and offer precise insights that today’s modern and complex enterprises require. We continue to push the boundaries to empower DevOps and SRE teams as we enhance our platform relentlessly.”

Sisense Releases New AI Natural Language Capabilities to Improve Data-Driven Decision Making

Sisense, a leading analytics platform for builders, announced its latest quarterly release which empowers users with deep, actionable insights from their complex data, no matter where it is located. The Q1 ‘20 quarterly release includes a focus on AI throughout the platform, the Sisense patent-pending Knowledge Graph based on hundreds of billions of past analytics events, and the robust and flexible tools that developers have come to expect from Sisense. Sisense simplifies digital transformation and empowers everyone inside and outside an organization to make data-driven decisions while balancing the needs of end-users and technical teams.

Recent developments in Natural Language Processing (NLP) have been heralded as being “revolutionary” for driving better search engines, smarter chatbots, and digital assistants. One type of Natural Language Query offers a Google-like interface. NLP is expected to boost the adoption of analytics and business intelligence significantly given that understanding data will become much easier. Sisense has been innovating in this area since its integration with Amazon Alexa in 2016 and continues to invest in it.

Sisense Natural Language Query (NLQ) enables non-technical users to explore their data and gain deeper insights, even if they are not familiar with the data structure. Users begin by simply typing a question (in plain English) and immediately receive suggestions and personalized recommendations based on past searches and organizational usage patterns, along with full support for spelling mistakes, synonyms and ambiguous terms. Sisense NLQ delivers greater analytical agility, deeper data explorations, and shorter times-to-insight. It is available today for early adopters and will be generally available in May.

“NLQ is the promise of democratizing data for users inside and outside companies; and our Embedded Playground is an industry-first, open resource that allows builders to experiment and demonstrate the value of new types of analytics capabilities to their stakeholders,” said Guy Boyangu, CTO and co-founder of Sisense. “Early response to this product release is extremely positive and we believe it will not only accelerate the success of our customers and partners, but will bring BI and analytics to the forefront of every C-level executive as they continue their digital transformation journey.”

How to Train Your Algo: Software Development Firm Art+Logic Launches Vibrary, an Open-Source AI Tool for Audio Pros

In collaboration with Dr. Scott Hawley, Physics Professor at Belmont University, Art+Logic is unveiling Vibrary, the first project to come out of its incubator lab. Vibrary uses machine learning to analyze short samples and loops. Its design makes it easy for producers, composers, and musicians to train their own models and classify sounds by sound, genre, feel or other characteristics, defined by users’ needs and preferences.

The open-source AI tool features a helpful interface to make training algorithms accessible to anyone with a computer, internet connection, and a massive sound library. “Much of the technology involved is straightforward, but what is unique about us is that we built a user-friendly utility that lets people train their own AI,” explains Hawley.

Before Art+Logic embraced the project for its incubator initiative, Hawley had been playing around with algorithms and audio files for years, a hobby, of sorts, of the busy physics professor and musician. He dug into the work of top researchers using spectrograms, the visual representations of sound, as objects for algorithmic classification. It enabled him to find and tag sounds in a massive, confusingly-labelled sample and patch library, something producers and composers often struggle with.
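For readers unfamiliar with spectrograms, the sketch below shows one simple way to turn an audio signal into the time-by-frequency grid that a classifier can treat like an image. It is a minimal illustration using only the Python standard library, not the code behind Panotti or Vibrary; the `spectrogram` function, the frame and hop sizes, and the test tone are all assumptions chosen for the example.

```python
import cmath
import math


def spectrogram(samples, frame_size=64, hop=32):
    """Return a list of frames; each frame holds magnitudes for bins 0..frame_size // 2."""
    # A Hann window tapers each frame to reduce spectral leakage at its edges.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1))
              for n in range(frame_size)]
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = [samples[start + n] * window[n] for n in range(frame_size)]
        magnitudes = []
        for k in range(frame_size // 2 + 1):
            # Naive discrete Fourier transform for frequency bin k.
            acc = sum(frame[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                      for n in range(frame_size))
            magnitudes.append(abs(acc))
        frames.append(magnitudes)
    return frames


# Hypothetical test signal: a 1 kHz sine tone sampled at 8 kHz.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 1000 * t / sample_rate) for t in range(512)]
spec = spectrogram(tone)

# The strongest bin in the first frame, converted back to a frequency in Hz.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * sample_rate / 64
```

Each row of `spec` is one slice of time and each column is one frequency band, so a sample library becomes a set of small images. A production tool would typically use a fast Fourier transform rather than this naive loop.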

He dubbed his experiments Panotti, named for the big-eared people of medieval legend, and eventually he took his prototypes to Nashville’s ASPIRE Research Co-op, a gathering dedicated to audio innovation. He and his fellow researchers worked to improve Panotti.

There was a problem, however. Hawley’s model gave good results, but it was a pain to set up and train. Hawley imagined a better way, one accessible to audio pros, and Art+Logic helped him find it, creating a simple, attractive interface. This interface ensures Vibrary leaps past a major sticking point for many specialized machine learning projects: Domain experts aren’t data scientists, and data scientists may have no clue how domain experts use or perceive the data. Vibrary empowers audio pros to build their own AI without a data-science background.

“Scott’s interface was built in Python, but it wasn’t something the overwhelming majority of users would have been able to configure,” says Jason Bagley, senior software developer at Art+Logic, himself an electronic musician. “We wanted something someone could download and start running immediately. We simplified things, automating a lot of processes. I came up with a user flowchart for training and categorization. My colleague Daisey Traynham turned the flowchart into an interface that is simple to use, hiding as much of the complexity as possible.”

Nuance Launches Nuance Mix DIY Toolset, Opens Access to Market-Leading Conversational AI

Nuance® Communications, Inc. (NASDAQ: NUAN) announced the launch of Nuance Mix, an open enterprise-grade, software-as-a-service (SaaS) tooling suite for creating advanced conversational experiences that power Virtual Assistants (VA) and IVR using Nuance’s industry-leading Conversational AI.

As global organizations increasingly look to integrate Conversational AI into their digital and voice customer engagements, the ability to build a conversational experience once and deploy it across channels and modalities has become critical. Nuance Mix allows those organizations to build, maintain and deliver the complex enterprise-grade conversational experiences that help get vital transactions resolved.

“Nuance Mix is built on decades of experience in conversational design across voice and chatbot solutions. It provides the scalable and flexible deployment options required to address the multifaceted needs of organizations, including meeting security and compliance demands,” said Joe Petro, EVP and CTO, Nuance. “With Nuance Mix, organizations can use market-tested tools and expert-built models to design, develop, test and maintain their applications.”

Imply 3.3 Extends Performance and Reduces TCO for Real-Time Intelligence

Imply, the real-time intelligence company, announced Imply 3.3, featuring enhancements that help customers optimize their analytics spend and improve time to insight on their freshest data, while extending Imply’s leading real-time analytics performance to a broader set of queries.

Enterprise leaders in a range of industries use Imply to deliver self-service real-time analytics to their business users, make business intelligence interactive and exploratory, and create data-driven applications for their customers. They’ve wanted the ability to query multiple data sets directly using standard SQL operations while not sacrificing performance, and now they can.

Imply 3.3 takes advantage of new SQL JOIN support in Apache Druid 0.18. The addition of JOIN operations broadens Druid’s performance advantage over data warehouses and data lake query engines by leveraging Druid’s architectural advantages such as advanced indexing and horizontal query distribution. Druid’s innate query speed advantage over data lake query engines was demonstrated last year by researchers at the University of Minho (Portugal). Druid displayed a 10X to 59X advantage over Presto and was 110X to 190X faster than Apache Hive.

“Digital transformation has spawned huge amounts of continuously flowing data. Our customers’ challenge is to bring that data to bear on day-to-day decisions, cost-effectively,” said Fangjin Yang, chief executive officer and co-founder of Imply. “Our latest release greatly improves the cloud computing economics of real-time intelligence, while maintaining best-in-class performance, so that companies can extend analytics beyond the analyst, to business users, while maintaining fiscal responsibility.”

Altair Announces Major Update to Panopticon Real-time Data Monitoring and Analysis

Altair (Nasdaq: ALTR), a global technology company providing solutions in product development, high-performance computing (HPC), and data analytics, announced a major new release of Panopticon, its comprehensive platform for user-driven monitoring and analysis of real-time trading and market data. Panopticon now delivers the speed, flexibility, and scalability of a cloud-based solution for data streaming and visualization, further simplifying the deployment and expansion of user-generated content, dashboards, and applications.

Established as an industry leader that supports electronic trading operations in global banks and with asset managers, the enhanced capabilities of Panopticon are also ideally suited to other sectors where real-time monitoring and analysis of high-volume, high-velocity data streams is equally critical. These include operational data analytics applications in manufacturing, logistics, telecoms, oil and gas production, and energy distribution.

“Our clients are some of the most sophisticated users of data analytics systems in the world, and we’ve been working very closely with them to define the platform’s new architecture and capabilities,” said Sam Mahalingam, Altair CTO. “With Panopticon, our clients can examine their time series data down to the millisecond – or below – as well as monitor any number of real-time streaming feeds in actionable ways. It’s a real-time world, and with Panopticon 2020, we are delivering a ‘single pane of glass’ view into the complete application lifecycle in the most scalable cloud-ready streaming analytics platform on the market.”

Opsani Introduces Free Cloud Cost Reduction Services for Cloud Apps

Opsani, a leading provider of AI-driven optimization for cloud applications, introduced Project Vital, a new service that offers Opsani’s cloud optimization capability for free for the next three months to help companies lower their cloud bills during the economic slowdown. Opsani works with companies to reduce costs by up to 70 percent through cloud optimization while meeting customer-set performance goals.

Opsani cloud optimization (CO) deploys ML algorithms to optimize cloud applications and infrastructure and uses AI to autonomously adjust runtime configurations so that applications execute most efficiently at varying traffic profiles. Those automatic efficiencies ensure cloud applications deliver the optimal cost and user experience.
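The underlying trade-off, lowest cost subject to a performance goal, can be sketched in a few lines. The example below is a plain exhaustive search over a handful of made-up configurations; it is not Opsani's ML-driven method, and the `cheapest_config_meeting_goal` helper, the field names, and every number in it are hypothetical.

```python
def cheapest_config_meeting_goal(candidates, max_latency_ms):
    """Return the lowest-cost candidate whose p95 latency meets the goal, or None."""
    feasible = [c for c in candidates if c["p95_latency_ms"] <= max_latency_ms]
    if not feasible:
        return None
    return min(feasible, key=lambda c: c["hourly_cost"])


# Hypothetical measurements for three runtime configurations of one service.
candidates = [
    {"cpu": 8, "memory_gb": 32, "hourly_cost": 0.80, "p95_latency_ms": 110},
    {"cpu": 4, "memory_gb": 16, "hourly_cost": 0.40, "p95_latency_ms": 150},
    {"cpu": 2, "memory_gb": 8, "hourly_cost": 0.20, "p95_latency_ms": 320},
]

# Customer-set goal (assumed): p95 latency of at most 200 ms.
best = cheapest_config_meeting_goal(candidates, max_latency_ms=200)

# Fractional saving relative to the largest configuration, taken as the baseline.
baseline = candidates[0]
saving = 1 - best["hourly_cost"] / baseline["hourly_cost"]
```

With these invented numbers, the smallest configuration is cheapest but misses the latency goal, so the mid-sized one is selected. A real optimizer would have to measure candidates under varying traffic rather than rely on a fixed table.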

“We’ve never experienced such a dramatic and immediate slowdown to our economic engine, and no sector has been spared. We believe technology has a key role to play in mitigating the huge and dynamic changes to our economy,” said Ross Schibler, CEO and Co-founder, Opsani. “We have a tool that provides service providers immediate relief from cloud bills. We want to be part of the recovery solution by offering our services for free to companies that need to immediately cut costs so they can keep people employed.”
