In this special guest feature, Vincent Terrasi, Product Director at OnCrawl, discusses what happens when data science and machine learning meet SEO. Vincent became Product Director for OnCrawl after having been Data Marketing Manager at OVH. He is also the co-founder of dataseolabs.com, where he offers training on Data Science and SEO. He has a varied background: 7 years of entrepreneurship running his own sites, then 3 years at M6Web and 3 years at OVH as Data Marketing Manager. He’s a pioneer in Data Science and Machine Learning for SEO.

Data science as a game changer for SEO

Data science crosses paths with both big data and artificial intelligence when it comes to analyzing and processing collections of data known as datasets. This growing field combines various tools, algorithms, and machine learning principles to discover hidden patterns in raw data.

Data science isn’t new in SEO: back in 2011, Google created Google Brain, a team dedicated to transforming Google’s products with artificial intelligence to make them “faster, smarter and more useful.” With 95% of searchers using Google as their main search engine, it was no surprise that the Mountain View company invested a lot in new technologies to improve the quality of their services. In 2015, Google Brain rolled out RankBrain, a game-changing algorithm that is used to improve the quality of Google search results. As about 15% of queries have never been searched for before, the purpose was to automatically allow Google to better understand the query in order to deliver the most relevant results.

These examples, among many others, of how Google implements data science in its algorithms show that these two disciplines are complementary. So now let’s take a look at how SEO can take advantage of machine learning.

The value of machine learning for SEO

When it comes to applying machine learning to SEO, particularly with regard to how to save time on a daily basis and how to show the value of SEO to the C-Suite in your organization, three advantages come to mind:

Prediction

Prediction algorithms can help you prioritize your roadmap by highlighting keywords, identifying future long-tail trends or even predicting traffic. For example, focusing on long-tail keywords is an effective SEO strategy, as together they often bring in more traffic than any single top, highly competitive keyword. However, because each one depends on such a small number of searches, they can be difficult to predict and plan for. Still, companies, marketing directors, and many other decision-makers have several reasons to ask for SEO traffic projections:

- To be sure of the investment

- To balance expenses between organic and paid channels

Using trained models, Facebook Prophet and Google Search Console data, you can detect future long-tail trends and build a predictive model to forecast Google hits.

Text generation

An efficient SEO strategy requires good content, but content is expensive to create and maintain on a website. It can also simply be hard to find inspiration.

This is why automatic content generation is valuable. When the generated text is of high quality, it can be used for:

- Creation of anchors for internal linking

- Mass-creation of variants of title tags

- Mass-creation of variants of meta descriptions

Automation

Automation is helpful for labeling images, and possibly videos, with an object detection algorithm such as those available in TensorFlow. The resulting labels make it easy to optimize alt attributes. Automation can also be used for A/B testing, as it is fairly simple to make basic changes to a page.

Automation can also be used to detect anomalies before Google notices them. One purpose of an SEO audit is to find metrics or KPIs where the website does not perform as expected.

Using machine learning to find anomalies revealed by crawls also means that you can take seasonal events into account, along with gradual changes to the website over time.

How to connect technical SEO to machine learning

A practical case study with long-tail keywords

Now, in practice, how easy is it to connect technical SEO to machine learning?

Having access to a data science platform as well as API access to an SEO tool would make your life much easier.

If you don’t have this type of access, I’d like to introduce you to a methodology that you can use to predict long-tail keyword trends. Long-tail keywords are search terms that have a lower search volume and competition rate than short-tail keywords. Together, they often bring in more traffic than any top, highly competitive keyword, which is why they are extremely helpful for any SEO strategy.

Objectives & Prerequisites

Our goal is to identify long-tail keywords for your site and to build a predictive model to forecast future long-tail trends and Google hits.

This method, using a Google Colab notebook and the Facebook Prophet algorithm, will allow you to forecast time series data based on an additive model in which non-linear trends are fit with yearly, weekly, and daily seasonality, as well as holiday effects.

For that, you will need:

- This Google Colab notebook

- Google Search Console API

- Facebook Prophet Library

5 steps to get your SEO predictions

I will walk you through 5 easy steps that use data science to predict long-tail keyword trends for your website.

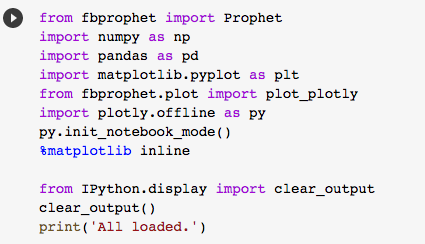

1. Import libraries

Once you’ve opened your Google Colab notebook, you need to import the Facebook Prophet library. Run the script to get these resources.

2. Connect your Google Search Console

To connect your Google Search Console, you need to add your “Client_ID” as well as your “Client_Secret”. Once you have filled out this information, you can run the script.

The notebook will now ask you to choose the GSC projects that you want to examine. You can simply choose the website that you want to analyse in the drop-down menu:

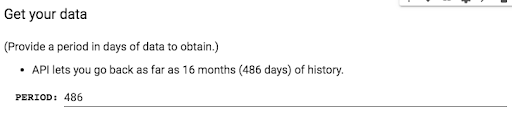

As the last step of the GSC API access, you need to provide the period that you’d like to examine. You can go back up to 16 months, which is the limit of data available in GSC.
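As a rough illustration of this step, here is a minimal sketch of how a Search Analytics request covering the last 16 months could be built. The helper names `months_ago` and `build_gsc_query` are hypothetical and are not taken from the notebook, which may build its request differently. Only the body fields (`startDate`, `endDate`, `dimensions`, `rowLimit`) come from the Search Console API.

```python
import calendar
from datetime import date


def months_ago(day: date, months: int) -> date:
    """Return the date `months` calendar months before `day`.

    The day of month is clamped, so 30 June minus 16 months gives 28 February.
    """
    total = day.year * 12 + (day.month - 1) - months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(day.day, last_day))


def build_gsc_query(end: date, months_back: int = 16) -> dict:
    """Build a Search Analytics request body (hypothetical helper)."""
    if not 1 <= months_back <= 16:
        raise ValueError("GSC only keeps about 16 months of data")
    return {
        "startDate": months_ago(end, months_back).isoformat(),
        "endDate": end.isoformat(),
        # One row per (date, query) pair, which is what a forecast needs.
        "dimensions": ["date", "query"],
        # 25000 is the maximum number of rows per request.
        "rowLimit": 25000,
    }
```

With the `google-api-python-client` library, a body like this is typically passed to `service.searchanalytics().query(siteUrl=..., body=...).execute()`, where `service` is an authorized Search Console client.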

3. Detect your long tail

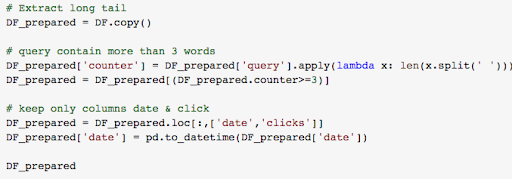

For this example, we will consider any query with more than three words to be a long-tail query:

Run the script to get all your long-tail queries, the number of clicks they get and their date.
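The filter itself can be very simple. The sketch below assumes rows shaped like a Search Analytics API response requested with the `date` and `query` dimensions, where each row has a `keys` list and a `clicks` count. The function names are hypothetical, and the notebook may implement the filter differently.

```python
def is_long_tail(query: str, min_words: int = 4) -> bool:
    """A query with more than three words counts as long tail."""
    return len(query.split()) >= min_words


def long_tail_rows(rows: list) -> list:
    """Keep the date, query and clicks of every long-tail row."""
    kept = []
    for row in rows:
        day, query = row["keys"]  # order follows the requested dimensions
        if is_long_tail(query):
            kept.append({"date": day, "query": query, "clicks": row["clicks"]})
    return kept


# Made-up sample rows, for illustration only.
sample = [
    {"keys": ["2020-01-01", "seo"], "clicks": 40},
    {"keys": ["2020-01-01", "how to forecast seo traffic"], "clicks": 3},
    {"keys": ["2020-01-02", "technical seo audit"], "clicks": 7},
]
print(long_tail_rows(sample))
```

Changing `min_words` lets you test a stricter or looser definition of the long tail on your own data.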

4. Get your data ready for Facebook Prophet

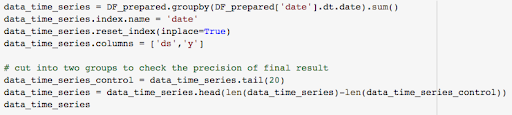

Now that you have all your long-tail keywords data, you need to prepare them for Facebook Prophet:

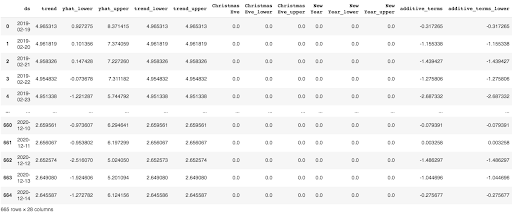

- Finalize the data: sort your data into two columns: a date column (ds) and a value column (y)

- Split the data into two groups: 80% of the data will be used to create the projections and the rest of the data will be used to check the accuracy of the projections.
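The two preparation steps above can be sketched with pandas as follows. This assumes a dataframe with `date` and `clicks` columns, and it assumes that clicks are summed per day so that each date has a single value, which the notebook may handle differently. The function names are hypothetical.

```python
import pandas as pd


def to_prophet_frame(long_tail: pd.DataFrame) -> pd.DataFrame:
    """Aggregate long-tail clicks per day into Prophet's ds / y layout."""
    # Assumption: one value per day, obtained by summing clicks over queries.
    daily = long_tail.groupby("date", as_index=False)["clicks"].sum()
    daily = daily.rename(columns={"date": "ds", "clicks": "y"})
    daily["ds"] = pd.to_datetime(daily["ds"])
    return daily.sort_values("ds").reset_index(drop=True)


def split_train_test(frame: pd.DataFrame, train_share: float = 0.8):
    """Split chronologically: the oldest 80% to fit, the rest to check."""
    cut = int(len(frame) * train_share)
    return frame.iloc[:cut], frame.iloc[cut:]
```

The split is chronological rather than random because the held-out part has to lie in the "future" of the training data for the accuracy check to be meaningful.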

5. Develop forecasting model and make predictions

To develop your forecasting model, you first need to create an instance of the Prophet class and then add your data:

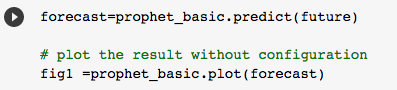

Then, create a dataframe with the dates for which you want a prediction with make_future_dataframe(periods=X), “X” being a number of days.

Call predict to make a prediction and store it in the forecast dataframe.

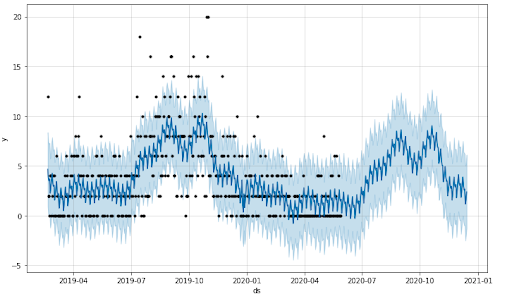

And here we are, you’ve just generated a first prediction graph with your own data!

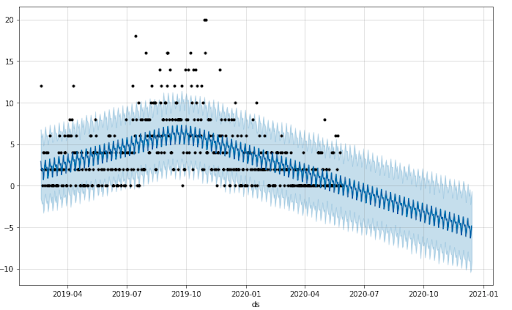

What you need to do now is adjust the model so that it reflects what actually happened in your website’s life cycle during the analysed period.

Let me explain: by default, Prophet creates 25 changepoints, or key events, that influence the data. But those key events probably do not correspond to the ones that really matter for your website, like Christmas if you have an ecommerce website or the Super Bowl if you have an online media site dedicated to sports.

To set your number of changepoints, use the n_changepoints parameter when initializing Prophet. Prophet will also let you adjust the “range” of these key events, meaning the impact that they will have on the prediction.

Now that you’ve added more changepoints, your graph should look more like this:

You will probably need to adjust the number of changepoints and the value of changepoint_prior_scale to get the result that you want. Feel free to play with the scripts to reduce the error rate and choose your scale.
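To compare settings objectively, you can measure the error of each forecast against the data you held back. The sketch below is one possible way to do this, not the notebook's own method. It assumes a forecast dataframe with `ds` and `yhat` columns and a held-out dataframe with `ds` and `y` columns. The function name `forecast_error` is hypothetical.

```python
import pandas as pd


def forecast_error(forecast: pd.DataFrame, holdout: pd.DataFrame) -> dict:
    """Compare predictions with held-out clicks on the dates they share."""
    merged = holdout.merge(forecast[["ds", "yhat"]], on="ds", how="inner")
    abs_err = (merged["y"] - merged["yhat"]).abs()
    # Days with zero clicks are skipped for MAPE to avoid dividing by zero.
    nonzero = merged["y"] != 0
    mape = (abs_err[nonzero] / merged.loc[nonzero, "y"].abs()).mean()
    return {
        "days": len(merged),
        "mae": float(abs_err.mean()),  # mean absolute error, in clicks
        "mape": float(mape),           # mean absolute percentage error
    }
```

You can then refit with different values, for example `Prophet(n_changepoints=..., changepoint_prior_scale=...)`, and keep the combination that gives the lowest error on the held-out period.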

Once you’ve done that, you can calculate the final projection. Run the script to get your final result and forecast data:

Wrap it up

We have seen in this post a method to leverage your GSC data with Facebook Prophet. With nothing more than the right API access and this Google Colab notebook, you can easily create a reliable forecast of your long-tail keywords and Google hits.

These data can be used directly in reports, but also when setting goals for a marketing campaign or as a benchmark to establish the value of a project.

Once you see how useful this type of analysis can be for SEO, it will be hard to imagine a time when data science and technical SEO didn’t go hand in hand.
