A Brief Overview of the Strengths and Weaknesses of Artificial Intelligence

In this contributed article, editorial consultant Jelani Harper suggests that because each form of AI has its own strengths and challenges, prudent organizations will combine these approaches for the most effective results. Certain solutions in this space combine vector databases and applications of LLMs with knowledge graph environments, which are ideal for employing Graph Neural Networks and other forms of advanced machine learning.

Scaling Data Quality with Computer Vision on Spatial Data

In this contributed article, editorial consultant Jelani Harper discusses a number of today’s hot topics: computer vision, data quality, and spatial data. Computer vision is an extremely viable facet of advanced machine learning for the enterprise. Its utility for data quality is evinced by several high-profile use cases. This technology can produce similar boons for other facets of the ever-shifting data ecosystem.

Video Highlights: Attention Is All You Need – Paper Explained

In this video presentation, Mohammad Namvarpour presents a comprehensive study of the renowned paper “Attention Is All You Need” by Ashish Vaswani and his coauthors. This paper marked a major turning point in deep learning research. The transformer architecture it introduced is now used in a variety of state-of-the-art models in natural language processing and beyond. Transformers are the basis of the large language models (LLMs) we’re seeing today.

Anomaly Detection: Its Real-Life Uses and the Latest Advances

In this contributed article, Al Gharakhanian, Machine Learning Development Director at Cognityze, takes a look at anomaly detection: its real-life use cases, the critical factors to address, and its relationship to machine learning and artificial neural networks.

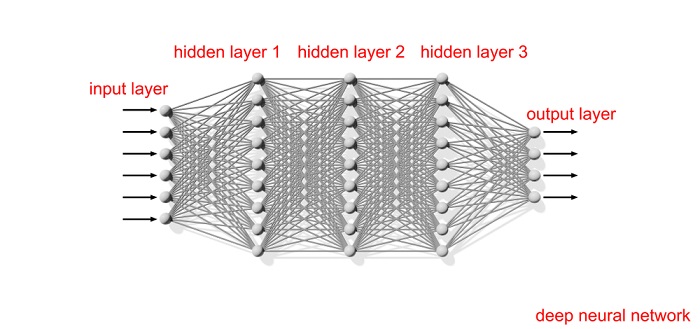

Research Highlights: Deep Neural Networks and Tabular Data: A Survey

In this regular column, we take a look at highlights of the day’s important research topics in big data, data science, machine learning, AI and deep learning. It’s important to stay connected with the research arm of the field in order to see where we’re headed. In this edition, we feature a new paper showing that for tabular data, algorithms based on gradient-boosted tree ensembles still outperform deep learning models. Enjoy!

The Amazing Applications of Graph Neural Networks

In this contributed article, editorial consultant Jelani Harper points out that a generous portion of enterprise data is Euclidean and readily vectorized. However, there’s a wealth of non-Euclidean, multidimensional data serving as the catalyst for astounding machine learning use cases.

What’s Under the Hood of Neural Networks?

In this contributed article, Pippa Cole, Science Writer at the London Institute for Mathematical Sciences, discusses new research on artificial neural networks that has added to concerns that we don’t have a clue what machine learning algorithms are up to under the hood. She highlights a new study that focused on two completely different deep-layered machines and found, to the researchers’ surprise, that they did exactly the same thing. It’s a demonstration of how little we understand about the inner workings of deep-layered neural networks.

Research Highlights: Attention Condensers

A group of AI researchers from DarwinAI and the University of Waterloo announced an important theoretical development in deep learning around “attention condensers.” The paper describing this advancement is “TinySpeech: Attention Condensers for Deep Speech Recognition Neural Networks on Edge Devices,” by Alexander Wong, et al. Wong is DarwinAI’s CTO.

Machine Learning Beyond Predefined Recipes

The next evolution in human intelligence is automating the creation of machine learning models so that they do not follow predefined formulas but instead adapt and evolve according to the problem’s data. While machine learning has enabled massive advancements across industries, it requires significant development and maintenance effort from data science teams. Enter Darwin, a machine learning tool that automates the building and deployment of models at scale.

An Introduction to Deep Learning and Neural Networks

In this contributed article, Agile SEO technical writer and editor Limor Wainstein explains that deep learning, neural networks, and machine learning are not interchangeable terms. The article clarifies the definitions with an introduction to deep learning and neural networks.