As a data science practitioner who uses machine learning as the basis of many client projects, I’m increasingly finding myself on the front line of the so-called “Killer AI” debate that keeps sprouting up around the web and in the mainstream press. Just recently I engaged in a discussion on Quora where a couple of people considered my dismissal of this “threat to humanity” a serious oversight of what could become mankind’s downfall. The main argument in support of the Killer AI notion is that a handful of very high-profile individuals believe in it: physicist Stephen Hawking, entrepreneur Elon Musk, and software industry giants Bill Gates and Bill Joy. Notice that NONE of these people, while brilliant in their own fields, has any direct experience with machine learning, AI or robotics. They’re all very smart and most know something about mathematics, but none has the formal understanding of AI, in the form of machine learning and deep learning, that an academic researcher or professional in the field should have.

What the Experts Say

You’re not seeing people deeply involved in the field of AI and machine learning coming out in support of the Killer AI myth. In fact, they’re taking time out of their busy schedules and careers to make public statements to the contrary, trying to persuade the lay public how preposterous this concern is. Here are a few excerpts from AI luminaries whom I respect:

Here’s the perspective of Professor Andrew Ng, my old Stanford professor, who founded the Google Brain project and built the famous deep learning net that learned on its own to recognize cats in videos, before he left to become Chief Scientist at Chinese search engine company Baidu:

“Computers are becoming more intelligent and that’s useful as in self-driving cars or speech recognition systems or search engines. That’s intelligence,” he said. “But sentience and consciousness is not something that most of the people I talk to think we’re on the path to.”

Here’s the perspective of Yann LeCun, Facebook’s director of AI research, a legend in neural networks and machine learning (the “LeNet” convolutional network is named after him), and one of the world’s top experts in deep learning:

“Some people have asked what would prevent a hypothetical super-intelligent autonomous benevolent A.I. to “reprogram” itself and remove its built-in safeguards against getting rid of humans. Most of these people are not themselves A.I. researchers, or even computer scientists.”

Here’s the perspective of Michael Littman, an AI researcher and computer science professor at Brown University, and former program chair for the Association for the Advancement of Artificial Intelligence:

There are indeed concerns about the near-term future of AI — algorithmic traders crashing the economy, or sensitive power grids overreacting to fluctuations and shutting down electricity for large swaths of the population. […] These worries should play a central role in the development and deployment of new ideas. But dread predictions of computers suddenly waking up and turning on us are simply not realistic.

And lastly, here’s the perspective of Oren Etzioni, a professor of computer science at the University of Washington, and now CEO of the Allen Institute for Artificial Intelligence:

“The popular dystopian vision of AI is wrong for one simple reason: it equates intelligence with autonomy. That is, it assumes a smart computer will create its own goals, and have its own will, and will use its faster processing abilities and deep databases to beat humans at their own game. It assumes that with intelligence comes free will, but I believe those two things are entirely different.”

Non-Experts Making Expert Opinions

The notion of Killer AI is being perpetuated by non-experts offering expert opinions. In many cases, smart people from diverse fields are deciding they’re qualified to assess the state of AI technology and to warn the world to watch out for what’s coming. A good example of this trend is a recent article in the venerable scientific journal Nature: “Intelligent robots must uphold human rights,” by Hutan Ashrafian, a lecturer and surgeon at Imperial College. Clearly, this was an opinion piece, but appearing in Nature lent it more credibility than it deserved. The piece started off with “There is a strong possibility that in the not-too-distant future, AIs, perhaps in the form of robots, will become capable of sentient thought.” This is an enormous stretch considering what the above expert researchers in the field believe. What gives a medical lecturer the tech street cred necessary to make such a declaration? He supports his premise by bringing up ideas from SciFi: Blade Runner, Ex Machina, and even Asimov’s Three Laws of Robotics. Hello!? This is science fiction, not reality.

Will Deep Learning Strangle You in Your Sleep?

It might be useful at this stage in the public discourse around Killer AI to examine just how sentience could spring into existence. It happens all the time in SciFi. Remember Mr. Data on Star Trek: The Next Generation, a sentient android created by Dr. Soong, along with his brother Lore, who used an “emotion chip” to become evil? Or there is the sinister HAL 9000 computer in 2001: A Space Odyssey that terrorizes crew member Dave Bowman. Or more recently, the “humanics” project on the CBS television show Extant featured human-like robots that one day decided to kill their creators and take over the world. Or there’s my all-time favorite, Blade Runner, where rogue “replicants” would go around killing people. All great stories, but these examples of Killer AI are from SciFi! I’m afraid that many who fear the Killer AI mystique today base their beliefs on these stories from science fiction.

Now let’s talk about what’s real. The Dec. 12 issue of the Los Angeles Times carried an article titled “Toyota is ramping up robot research.” It gives a more reality-based assessment of the state of AI, with so-called “partner” robots to help the elderly get around, autonomous cars, and Human Support Robots that fetch an item to your bedside and open curtains. This is a hot area of robotics called “contextual awareness.” But all this technology doesn’t sound so ominous because it isn’t. This is the reality of AI.

Also, MIT News ran an article on Dec. 10 titled “Computer system passes ‘visual Turing test’: Character-drawing machines can fool human judges.” The article talks about researchers who developed a computer system whose ability to produce a variation of a character in an unfamiliar writing system, on the first try, is indistinguishable from that of humans. This is the state of the art in AI technology: pure infancy.

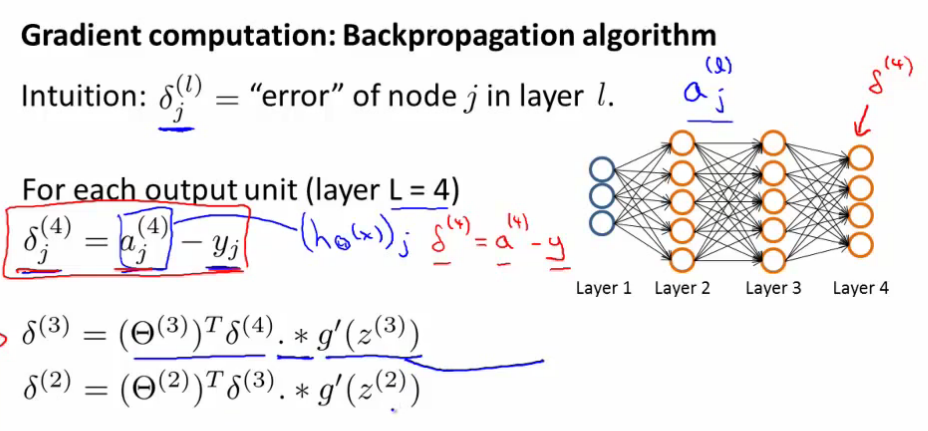

But let’s see how this level of pedestrian AI applications could turn evil. I’ve programmed machine learning solutions for data science projects using the neural network algorithm. I’ve written a back-propagation routine that adjusts the network’s weights so that data flowing forward through the network yields better predictions. I’ve stared at the lines of code that make all this happen. But I can tell you I cannot conceive of a situation where my lines of code suddenly “jump the tracks” and create their own path in the world. After all, this is what sentience is all about, right? The ability to feel, perceive, or experience subjectively. Exactly how would some neural net code that, say, drives an autonomous vehicle suddenly decide it wants to turn into oncoming traffic out of an express desire to kill its occupant? Think about it. It would require the impromptu writing of a new, potentially very complex “killer algorithm.” Computers don’t come up with plans to kill people, but if you let science fiction drive your belief system, then maybe so.
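To make the point concrete, here is a minimal sketch of the kind of code I’m talking about: a tiny neural network trained with back-propagation on the XOR problem, written in plain Python. It is an illustration only, not code from any client project. The network size, learning rate, epoch count and names such as `train` and `forward` are assumptions chosen for the example.

```python
import math
import random


def sigmoid(x):
    """Squash a number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def forward(x, w_hidden, w_out):
    """Forward pass: weighted sums followed by sigmoids, nothing more."""
    hidden = [sigmoid(w[0] * x[0] + w[1] * x[1] + w[2]) for w in w_hidden]
    # The last entry of w_out is the bias of the output unit.
    out = sigmoid(sum(w * h for w, h in zip(w_out, hidden)) + w_out[-1])
    return hidden, out


def mean_squared_error(data, w_hidden, w_out):
    """Average squared difference between predictions and targets."""
    return sum((forward(x, w_hidden, w_out)[1] - y) ** 2 for x, y in data) / len(data)


def train(data, n_hidden=3, lr=0.5, epochs=2000, seed=0):
    """Full-batch gradient descent with back-propagation (illustrative settings)."""
    rng = random.Random(seed)
    # Each hidden unit has two input weights plus a bias.
    w_hidden = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(n_hidden)]
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden + 1)]
    initial_loss = mean_squared_error(data, w_hidden, w_out)
    n = len(data)

    for _ in range(epochs):
        g_hidden = [[0.0] * 3 for _ in range(n_hidden)]
        g_out = [0.0] * (n_hidden + 1)
        for x, y in data:
            hidden, out = forward(x, w_hidden, w_out)
            # Chain rule at the output: d(error)/d(pre-activation).
            d_out = 2.0 * (out - y) * out * (1.0 - out)
            for j, h in enumerate(hidden):
                g_out[j] += d_out * h
                # Propagate the error signal back to hidden unit j.
                d_h = d_out * w_out[j] * h * (1.0 - h)
                g_hidden[j][0] += d_h * x[0]
                g_hidden[j][1] += d_h * x[1]
                g_hidden[j][2] += d_h
            g_out[-1] += d_out
        # Nudge every weight against its averaged gradient.
        for j in range(n_hidden):
            for k in range(3):
                w_hidden[j][k] -= lr * g_hidden[j][k] / n
            w_out[j] -= lr * g_out[j] / n
        w_out[-1] -= lr * g_out[-1] / n

    return w_hidden, w_out, initial_loss, mean_squared_error(data, w_hidden, w_out)


# XOR truth table: inputs and the target output for each.
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
w_hidden, w_out, initial_loss, final_loss = train(XOR)
print("loss before training:", initial_loss)
print("loss after training: ", final_loss)
for x, y in XOR:
    print(x, "target", y, "prediction", forward(x, w_hidden, w_out)[1])
```

Everything the program does is in those lines: multiply, add, squash, compare with the target, and nudge the weights. There is no step at which it could pick a goal other than reducing its prediction error.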

Machine Learning Experts Need to Step Up

There’s been an increasing amount of misinformation on this subject appearing in the mainstream press. For example, “How Elon Musk and Y Combinator Plan to Stop Computers From Taking Over” appeared online on Dec. 11, 2015. The article talks about the formation of a new non-profit venture, OpenAI, “all to ensure that the scary prospect of computers surpassing human intelligence may not be the dystopia that some people fear.” In this case, the journalist is using sensationalism to get page views by playing off the Killer AI theme. Instead, OpenAI is more about data privacy and maintaining individual control over your data.

“Well, there’s all the science fiction stuff, which I think is years off, like The Terminator or something like that,” said Sam Altman, CEO of Y Combinator, when asked about a bad AI. “I’m not worried about that any time in the short term. One thing that I do think is going to be a challenge, although not what I consider bad AI, is just the massive automation and job elimination that’s going to happen. Another example of bad AI that people talk about are AI-like programs that hack into computers that are far better than any human. That’s already happening today.”

That perspective is a far cry from “stop computers from taking over,” the idea promoted by the journalist. And that’s my point: it is time for AI professionals and academics to step up and dispel this ongoing fear-mongering myth. We don’t want the situation to get out of hand as it has with the small faction of climate change deniers who get equal billing with the overwhelming majority of actual climate scientists who acknowledge the problem. We need to have the science and scientists stand as a beacon for reality.

For those still holding fast to the Killer AI notion, I suggest you take a class in machine learning. I recommend Andrew Ng’s Stanford University class “Machine Learning,” available on Coursera. This class will give you a good introduction to machine intelligence, in particular the section on neural networks. You’ll be able to program your own algorithms and understand the basics of the mathematics behind statistical learning. Once you reach that level of familiarity, your fears will fade away, guaranteed!

Contributed by: Daniel D. Gutierrez, Managing Editor of insideBIGDATA. He is also a practicing data scientist through his consultancy AMULET Analytics. Daniel just had his most recent book published, Machine Learning and Data Science: An Introduction to Statistical Learning Methods with R, and will be teaching a new online course starting on Jan. 13 hosted by UC Davis Extension: Introduction to Data Science.

You are neglecting a couple of key points. One, AI is much more than just data analytics and neural networks. Two, no, your code may not cause it to jump the tracks, but someone else’s, someone who wants it to do just that and cause problems, could. So at present the danger lies not in the AI but in the ones writing the code.

If true AI were achieved then you would have a problem, as it would write its own code and would no longer be controllable. It would not be limited to a body and it would have control of a lot of things that computers run now. We are not close to true AI right now, which would end up being much more than the deep learning, machine learning, neural networks, cognitive computing and natural language processing we have today.

But your point is equivalent to hacking: someone purposefully making changes to code with evil intent. That is not sentience, or Killer AI. That is human interference. Your reference to “true AI” exists only in SciFi.

There is an inherent conflict of interest in AI researchers acknowledging concerns about possible success in AI. Also, there is a strong tendency to say that something can’t be done when you personally don’t see how to do it. The people who were always most vocal in predicting the end of Moore’s law were the lithography engineers themselves. Most engineers did not personally see how to get over the next hurdle. As a reasonably prominent AI researcher myself, I think it is hubris to claim to know what cannot be done.

The conflict of interest excuse is kind of worn. It is used all the time, as with climate change researchers: oh, they’re just raising concerns to pad their grants. I know too many machine learning researchers to believe they’re hiding Killer AI concerns to protect their research funding. But you seem to have done original research in the field. As an expert, please explain how a piece of R or Python code can suddenly become self-aware and start taking over. Sentience is a huge leap of faith.

Generally, danger comes from our own stupidity, not from the intelligence of others. Instead of being afraid of a hypothetical threat in the not-so-near future, we should be concerned today about the development of military robots, for instance. Does any such robot really need to be intelligent to be a threat to human life and to peace?

I never liked the term “AI” or “Artificial Intelligence.” I have been on the leading edge of robotics, machine vision and what is now called machine learning since the mid 80s. Much of my work has been secret and therefore unpublished. Even so, there is sufficient public information to prove my lengthy and deep experience in this field. Daniel Gutierrez is correct in his assessment of the threat from AI. There is no evidence of any real threat from AI. I would go even further and say there is no evidence of real intelligence in AI. Sci-fi is fun. Try to remember that the “fi” stands for fiction. https://www.linkedin.com/in/kenneth-w-oosting-7a663a6/