Newly emerging AI technologies pervade every industry: game-changing products and services such as voice-controlled devices, autonomous vehicles, and even cures for illness are here or on the horizon. AI-driven organizations are making their products more intelligent and optimizing processes such as operations and decision-making. These capabilities are transforming industries and revolutionizing business.

Deep Learning (DL) is at the epicenter of this revolution. It is based on complex neural network models loosely inspired by the human brain. Developing such DL models is extremely compute-intensive and has been enabled, in great measure, by new hardware accelerators that satisfy the need for massive processing power. Organizations are investing heavily in bringing AI accelerators into their data centers or using them in the public cloud, yet they continue to struggle to manage these critical resources cost-effectively and efficiently.

This white paper by Run:AI, which provides a virtualization and acceleration layer for deep learning, addresses the challenges of expensive and limited compute resources. It identifies ways to optimize those resources by applying concepts from virtualization, High-Performance Computing (HPC), and distributed computing to deep learning.

The 14-page white paper includes the following compelling topics:

- Deep Learning Meets the Enterprise

- The Run:AI Solution – Simplifying GPU Management and Machine Scheduling

- Run:AI in Action – Case Study

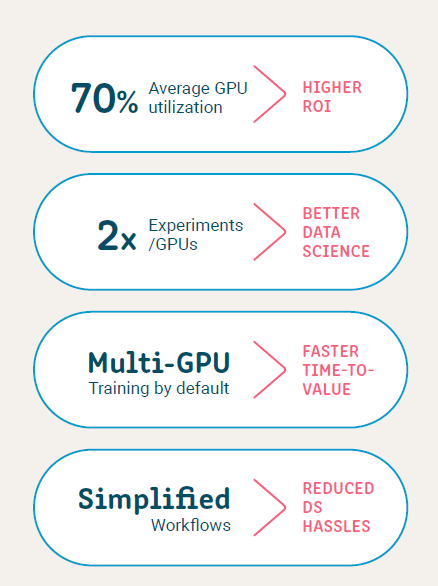

The Run:AI software solution decouples data science workloads from hardware by defining two task types that correspond to two distinct workloads: a smaller build type and a larger train type. Build tasks are assigned limited GPU resources, while train tasks are guaranteed unlimited resources. By pooling resources and applying an advanced scheduling mechanism to data science workflows, with guaranteed quotas for priority jobs, Run:AI customers can increase the number of experiments they run, speed time to results, and ultimately meet the business goals of their AI initiatives.
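To make the build/train split concrete, here is a minimal Python sketch of pooled GPU allocation with two task types. It is an illustration only and not Run:AI's actual scheduler: the `Job` class, the `allocate` function, and the `build_cap` parameter are hypothetical names, and the policy (cap each build job, then let train jobs draw whatever remains in the shared pool, in submission order) is an assumption made for the example.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    kind: str       # "build" (small, interactive) or "train" (large, long-running)
    requested: int  # number of GPUs the job asks for


def allocate(jobs, total_gpus, build_cap=1):
    """Return {job name: GPUs granted} from one shared pool.

    Hypothetical policy, for illustration only:
    - each build job is capped at `build_cap` GPUs
    - train jobs are uncapped and draw from the rest of the pool in order
    """
    free = total_gpus
    grants = {}

    # Pass 1: build jobs receive a small, capped share.
    for job in jobs:
        if job.kind == "build":
            granted = min(job.requested, build_cap, free)
            grants[job.name] = granted
            free -= granted

    # Pass 2: train jobs take as much of the remaining pool as they request.
    for job in jobs:
        if job.kind == "train":
            granted = min(job.requested, free)
            grants[job.name] = granted
            free -= granted

    return grants


if __name__ == "__main__":
    jobs = [
        Job("notebook", "build", 4),
        Job("resnet", "train", 6),
        Job("bert", "train", 8),
    ]
    print(allocate(jobs, total_gpus=8))
```

In this sketch the interactive build job cannot hold more than one GPU, so most of the eight-GPU pool flows to the training jobs. A real scheduler would also need queuing, preemption, and per-team quotas, which are omitted here.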

Download the new white paper, courtesy of Run:AI, to explore how the steep increase in the application of Deep Learning to practically every vertical is driving a dramatic need for more computing power.
