In this special guest feature, Sahar Mor, founder of AirPaper, discusses DALL-E – a new powerful API from OpenAI that creates images from text captions. With this, Sahar is planning to build a few products such as a chart generator based on text and a text-based tool to generate illustrations for landing pages. Sahar has 12 years of Engineering + Product Management experience, both focused on products with AI in their core. Previously he worked as an Engineering Manager in early-stage startups and at the elite Israeli intelligence unit – 8200.

Several months ago OpenAI published their latest research model DALL-E – an advanced neural network that generates images from text prompts and a natural progression of its powerful language model GPT-3. Furthermore, OpenAI has been recently signaling it will soon open API access to DALL-E in a closed beta phase.

Undoubtedly, this API release will generate many intriguing applications, but even before that, DALL-E is already a stepping stone in AI research, unlocking promising new directions with its combination of multiple models such as text and vision.

The Transformers revolution

DALL-E is a transformer language model built based on the Transformers architecture, a neural net that specializes in sequence-to-sequence tasks, including ones that have long-range dependencies such as long articles. This lends itself to domains such as language and computer vision, where dependency occurs between words and pixels.

DALL-E receives both the text and the image as a single stream of data and is trained using maximum likelihood to generate all of the subsequent tokens, one after another. To train it, OpenAI has created a dataset of 250 million text-image pairs collected from the internet.

The model demonstrated impressive capabilities. For example, it was able to draw multiple objects simultaneously and control their spatial relationship:

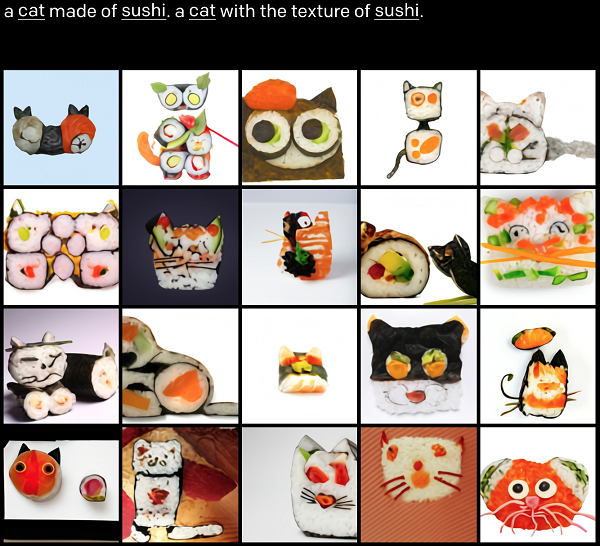

In another example, it has generated animals synthesized from a variety of concepts, including musical instruments, foods, and household items:

These images are 100% synthetical and are created in DALL-E’s deep mind of 12 billion parameters. Unfortunately, with the lack of a standardized benchmark to measure its performance, it’s hard to quantify how successful this model is in comparison to previous GANs and future image generation models.

Nevertheless, DALL-E’s impressive performance is thanks to its multimodal nature, where both textual and visual data are being used to train the underlying neural net. Multimodality is becoming popular again in days where most AI applications’ performance has plateaued, with the usage of only one type of data, e.g. text, to build artificial intelligence proving itself as jumping too short.

This perception is becoming increasingly popular, with Oreilly’s recent Radar Trends report marking multimodality as the next step in AI and other domain experts such as Jeff Dean (Google AI SVP) sharing a similar view.

General-purpose models

By now it’s a common understanding that narrow AI is not merely an equivalent to human intelligence, and in many cases is prone in its ability to generalize. As an example, even the state-of-the-art deep learning model for early-stage cancer detection (i.e. vision) is limited in its performance when it’s missing patient’s charts (i.e. text) from her electronic health record system.

On the promise of combining language and vision, OpenAI’s Chief Scientist Ilya Sutskever stated that in 2021 OpenAI will strive to build and expose models to new stimuli: “Text alone can express a great deal of information about the world, but it’s incomplete because we live in a visual world as well”. He then adds “this ability to process text and images together should make models smarter. Humans are exposed to not only what they read but also what they see and hear. If you can expose models to data similar to those absorbed by humans, they should learn concepts in a way that’s more similar to humans”.

Multimodality has been explored in the past. The main reason it wasn’t more widely researched and used is due to its shortcoming of picking up on biases in datasets. This can be solved with more data, which is becoming increasingly more available, but more importantly – with other novel techniques that enable more robust learning from less data.

These realizations are leading to interest picking up in multimodal research during the last several years. One example is Facebook’s AI Research lab (FAIR) recent papers outlining novel approaches for automatic speech recognition (ASR), showcasing major progress by combining audio and text.

DALL-E exhibiting promising results within less than a year since GPT-3’s release is great news to AI research and artificial general intelligence (AGI). The way it was built by leveraging multiple ‘senses’ is as exciting and gets OpenAI one step closer to its promise of building sustainable and safe AGI. It also creates endless new streams of research that will hopefully yield many more promising papers and applications of AI in the near future.

But more than anything, and once released – we can all expect our Twitter feed to once again be filled with mind-blown people in front of mind-blowing gifs, just this time with artificially generated images rather than artificially generated text.

Sign up for the free insideBIGDATA newsletter.

Join us on Twitter: @InsideBigData1 – https://twitter.com/InsideBigData1

Very informative thank you for sharing.

Multimodal AI is definitely going to be mainstream given that big tech companies are also entering the domain—see Google’s recent LaMDA announcement at the recent Google I/O. Big tech equals massive funding. And massive funding might lead to mind blowing breakthroughs.

Looking forward to it and thanks for the great read!

Multimodality is already growing in popularity – Deep Mind’s recent release of Perceiver is exactly that.

Those are exciting times to live in; Thanks for the interesting and concise piece.